The AI-Generated Art Revolution

Just a few years ago, if you wanted a machine to generate an image for you, you needed a big data set of the same kind of image. Want to create an image of a human face? You could train a GAN (a generative adversarial network) on a large collection of portraits and have it generate photorealistic portraits of people who do not exist.

But what if you want to generate an image straight from your imagination? You don't have any data. What you want is to just describe something, in plain language, and have a machine paint the picture.

This is now possible. The technique is called prompt-based image generation, or simply "text-to-image." Its main trick, in a nutshell, is a neural network trained on a vast collection of captioned images. That network becomes a Rosetta Stone for translating between written language and visual imagery. So when you say "a foggy day in San Francisco," the machine treats your description as a caption and can tell how well any given image fits it. Pair this translator with an image-generating system, such as a GAN, and you have an AI artist ready for your orders.
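To make the idea concrete, here is a minimal toy sketch in Python of that pairing: a scorer that rates how well an image fits a caption, and a generator whose input is nudged until the score goes up. Everything in it is an assumption made for illustration. The functions `toy_text_embedding`, `toy_image_embedding`, and `toy_generator` are hypothetical stand-ins for trained networks, the "image" is just a short list of numbers, and the search is simple random hill climbing rather than whatever optimizer a real system would use.

```python
import math
import random


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def toy_text_embedding(caption, dim=8):
    # Hypothetical stand-in for a trained text encoder: a fixed
    # pseudo-random vector derived from the caption string.
    rng = random.Random(caption)
    return [rng.uniform(-1.0, 1.0) for _ in range(dim)]


def toy_generator(latent):
    # Hypothetical stand-in for an image generator such as a GAN:
    # the "image" is just the latent vector squashed into (-1, 1).
    return [math.tanh(v) for v in latent]


def toy_image_embedding(image):
    # Hypothetical stand-in for a trained image encoder (identity here).
    return list(image)


def caption_fit(caption, image):
    """Score how well an image fits a caption (higher is better)."""
    text_vec = toy_text_embedding(caption, len(image))
    return cosine_similarity(text_vec, toy_image_embedding(image))


def generate(caption, dim=8, steps=500, step_size=0.1, seed=0):
    """Randomly perturb the generator input, keeping changes that fit the caption better."""
    rng = random.Random(seed)
    latent = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    initial_score = caption_fit(caption, toy_generator(latent))
    best_score = initial_score
    for _ in range(steps):
        candidate = [v + rng.gauss(0.0, step_size) for v in latent]
        score = caption_fit(caption, toy_generator(candidate))
        if score > best_score:
            latent, best_score = candidate, score
    return toy_generator(latent), initial_score, best_score


image, before, after = generate("a foggy day in San Francisco")
print(f"caption fit before search: {before:.3f}, after: {after:.3f}")
```

In a real system the two encoders would be a single model trained on captioned images, the generator would produce actual pixels, and the search would typically follow gradients instead of random guesses. The loop has the same shape, though: generate, score against the caption, adjust, repeat.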

It was only a matter of months before an online community of AI art hackers emerged. Twitter has become an open gallery of bizarre and wonderful AI-generated images.

Join the revolution

Want to know more? Join me for a panel discussion with four people who are deeply involved with this AI art revolution.

ML Show & Tell: The incredible, bizarre world of AI-generated Art

Now on Demand

Aaron Hertzmann is a Principal Scientist at Adobe, Inc., and an Affiliate Professor at the University of Washington. He received a BA in Computer Science and Art & Art History from Rice University in 1996, and a PhD in Computer Science from New York University in 2001. He was a professor at the University of Toronto for 10 years, and has worked at Pixar Animation Studios and Microsoft Research. He has published over 100 papers in computer graphics, computer vision, machine learning, robotics, human-computer interaction, perception, and art. He is an ACM Fellow and an IEEE Fellow.

Bokar N’Diaye is a graduate student at the University of Geneva (Switzerland). He is currently studying for a master’s degree in the anthropology of religions, and his interests lie at the intersection of the humanities and the digital. Mostly active on Twitter, where he illustrates the computer-generated scriptures of Travis DeShazo (@images_ai), he also collaborates with the Swiss collective infolipo on an upcoming project, Cybhorn, combining music, technology, and generative text.

Hannah Johnston is a designer and artist with an interest in new technologies for creative applications. Following a Bachelor's in Information Technology and a Master's in Information Systems Science, Hannah spent 9 years designing user experiences at Google. She currently works as a post-secondary instructor and design consultant.

Ryan is a Machine Learning Engineer/Researcher at Adobe with a focus on multimodal image editing. He has been creating generative art using machine learning for years, but is best known for his recent work with CLIP for text-to-image systems. He holds a Bachelor’s in Psychology from the University of Utah and is largely self-taught in machine learning.

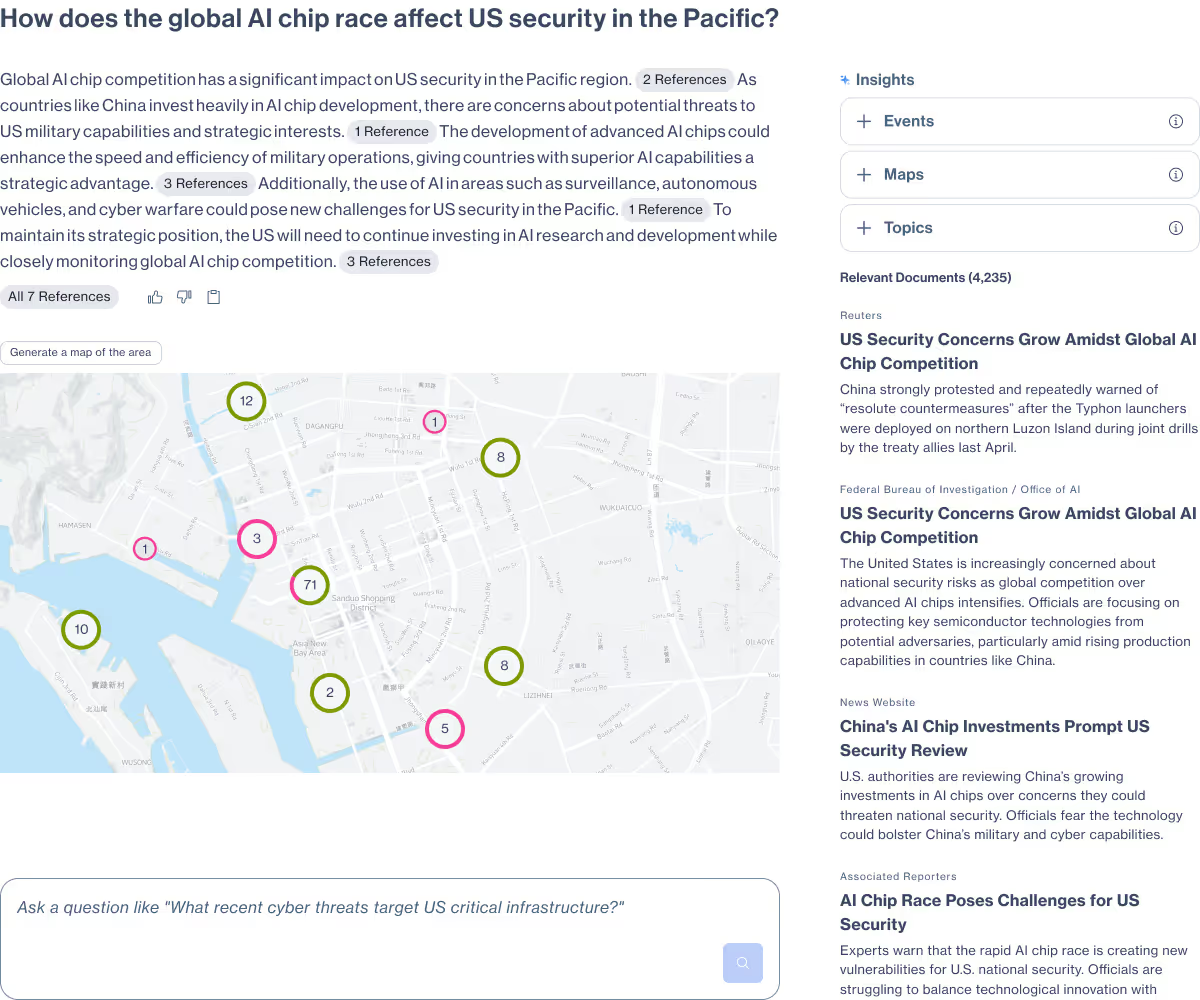

Primer Enterprise

Informed, defensible analysis

Primer Enterprise is a secure AI platform that helps analysts and mission teams across the Intelligence Community, Defense, and Civilian agencies analyze massive volumes of unstructured data. It transforms fragmented reports, proprietary data, and open-source information into structured, traceable insight that supports faster, defensible decision-making.

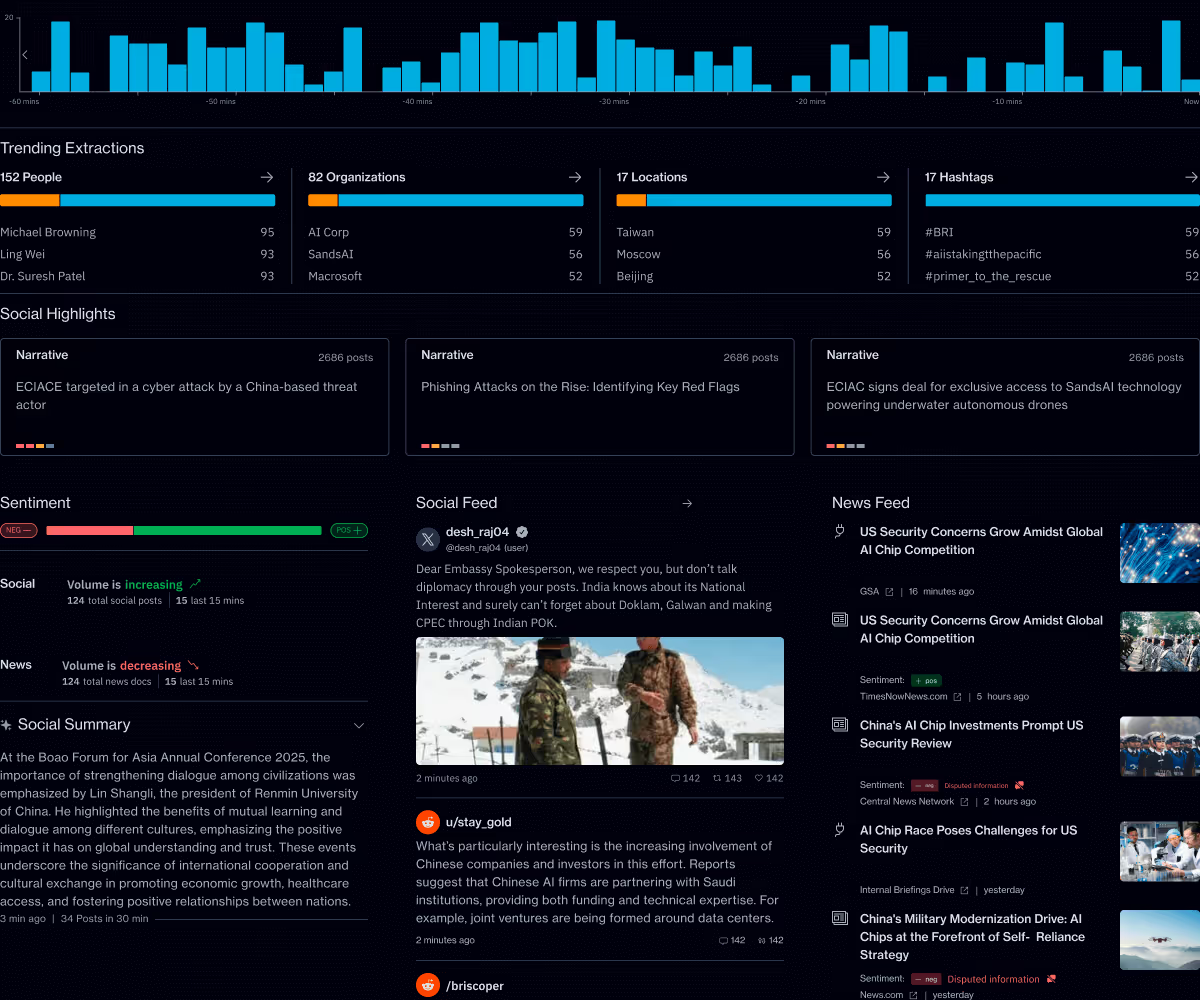

Primer Command

Real-time operational clarity

Primer Command is an AI-powered monitoring platform that helps mission teams keep track of narratives, track evolving topics, and detect emerging threats across global news and social media. It provides real-time visibility into the information environment so leaders can understand events as they unfold.