5 Predictions for NLP

NLP is experiencing a breakthrough moment. Its use cases, the technologies that fuel it, and the sophistication of its models have all grown rapidly in the last several years. Read on for Primer CEO Sean Gourley’s five predictions on why NLP has a bright future, especially in 2022.

We're in the golden age of technological advancement, especially when it comes to natural language processing (NLP). There are constant breakthroughs in neural network design, in training approaches, and in ways to scale these models as they are deployed. As the volume of information and data increases, NLP will be a crucial tool for both government organizations and commercial businesses in 2022.

From use cases across industries to interacting with human intelligence, Primer CEO Sean Gourley shares his predictions for how NLP is shaping the information landscape this year.

1. More industries will be leveraging NLP

The commercial space is increasingly adopting NLP solutions across a variety of industry sectors. NLP is now being used to track consumer behavior trends to better meet customer demands. It is also being used to build knowledge bases from an organization's internal data.

NLP helps decision-makers across industries cope with information overload. Without it, their competitive advantage is threatened. In the banking sector, analysts have successfully used NLP to analyze unstructured data to perform risk analyses, uncover new investment opportunities, and keep a pulse on current events that move markets.

"While building and fine-tuning our technology for mission-critical applications in the public sector, we quickly learned that workflows are very similar for our commercial customers. Our models are directly applicable to a wide variety of industries and use cases in the private sector, and very beneficial in situations where people require models with human-level accuracy and performance," said Gourley.

2. Humans and machines will work together for long-term success

Machines can outperform humans in both speed and processing power, processing information in nanoseconds. In high-stakes business and operational situations, that speed is a huge advantage. What's more, machines can process at scale. Decisions about specific, bounded tasks can be automated and handed to computers to make our lives easier.

"In terms of speed and scale, humans are massively outcompeted by machines by many orders of magnitude," said Gourley.

In high-stakes environments, decisions need to be made by humans. In today’s world, most decisions have both a machine and a human component that complement each other - the machine for processing and speed, and the human for critical thinking. If you can combine machines' speed and processing power with human-level precision and critical thinking, you can create a new, better intelligence system that can stand the test of time and new technologies.

"It's up to us to think about how to construct human and machine systems to best take advantage of the intelligence and skillsets of the workforce," said Gourley.

3. Humans will bring NLP models closer to self-supervision

Right now, the holy grail of AI is self-supervision - where machines can effectively operate independently. In NLP, model self-supervision happens alongside building foundational transformer networks. Depending on the function, supervision by humans is needed for it to work correctly. For instance, predictive text can be self-supervised while speech-to-text still requires human oversight.
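To illustrate why predictive text can be trained without human labels, here is a minimal sketch. It assumes a toy bigram counter rather than a transformer network, and the function names and sample corpus are hypothetical. The point is only that each training target is the next word in the raw text, so the text supervises itself.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count which word follows which; the labels come from the text itself."""
    words = text.lower().split()
    model = defaultdict(Counter)
    # Each (current, next) pair is a training example with a free label.
    for current_word, next_word in zip(words, words[1:]):
        model[current_word][next_word] += 1
    return model


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical sample corpus, used only for illustration.
corpus = "the analyst reads the report and the analyst writes the summary"
model = train_bigram_model(corpus)
suggestion = predict_next(model, "the")
```

No person annotated the corpus here. A task like speech-to-text, by contrast, typically needs audio paired with human-written transcripts.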

While end-to-end self-supervision doesn't yet exist for all NLP functions, there are opportunities to expand it by adding a human supervision layer. This expansion will come from investing in humans training these NLP systems, transferring their subject matter expertise into the tool to create a better solution.

"If we invest in humans and put training data into the systems, we will see the NLP models perform as we intended them to," said Gourley.

4. More information = more information manipulation

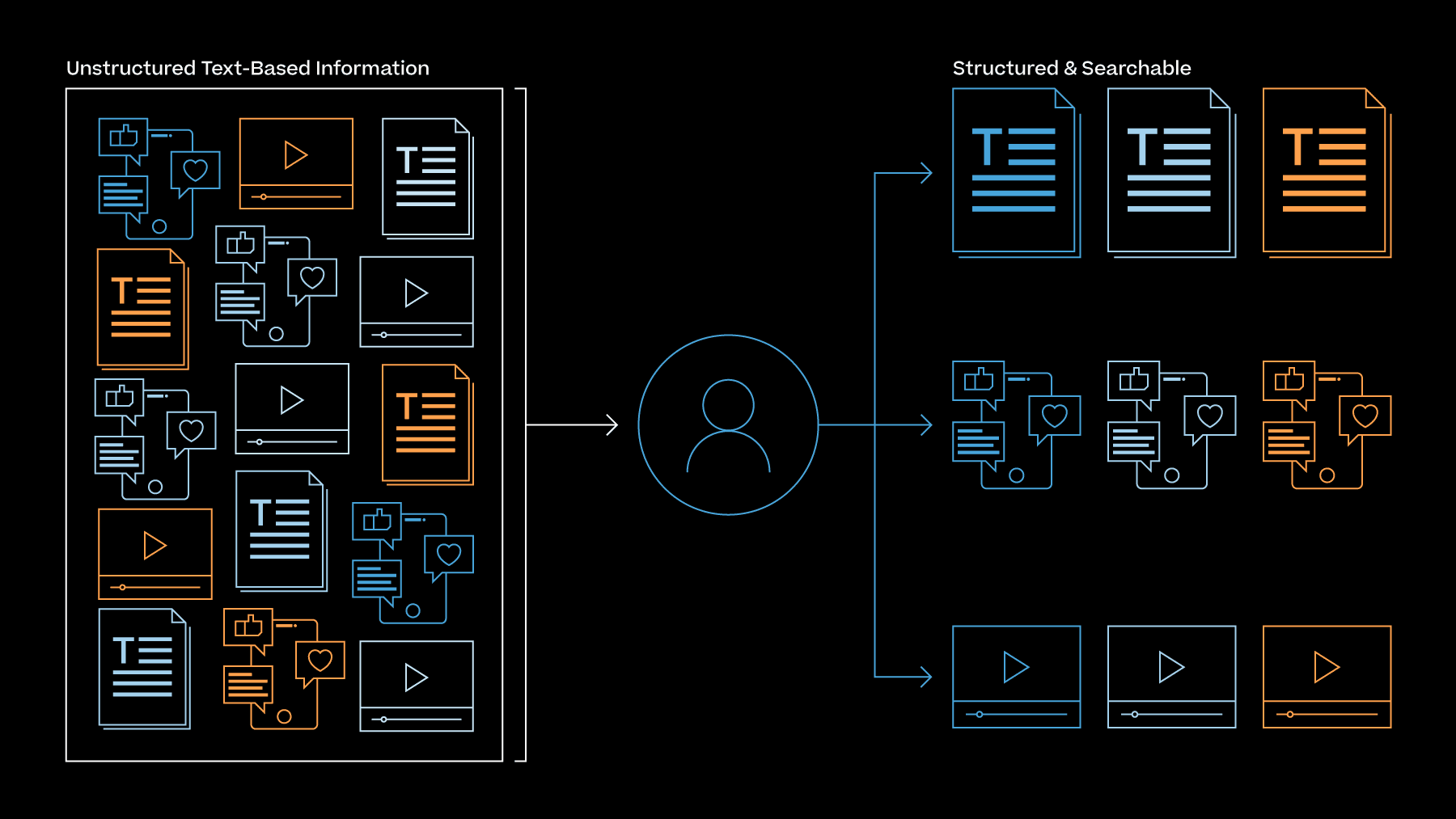

The volume of information is growing exponentially. Much of it is unclassified, open-source, and compiled from sources around the world. To be useful, that information needs to be ingested and integrated, alongside classified information, into an intelligence process.

However, the abundance of information, combined with recent advances in AI technologies, makes it easier for people to manipulate perspectives, push agendas, and emphasize certain viewpoints over others. As a result, our public information space now resembles an information battlefield. NLP models can help detect disputed claims and potential disinformation, revealing patterns of information manipulation that could otherwise find their way into our decision-making.

"We're going to see more information warfare, to corrupt signals, and to manipulate perspectives and narratives," said Gourley.

5. AI components are more valuable when integrated

To build and deploy effective AI models, you need to move back and forth through different stages: data ingestion, labeling, and deployment. Today these functions are supported by various tools, each with its own experts driving forward a single piece of the value chain. The constant hand-offs and the siloed, disconnected nature of each AI component make building AI both difficult and slow. Ideally, these functions and people are supported by a single interface that helps builders and users collaborate and analyze every bit of data inside an organization in a cost-effective manner.

That said, while each AI component is vital on its own, the components are more valuable when they are connected. In other words, successful NLP models leverage the strengths of each AI component used to build them. The result is an advanced NLP model that reveals a clearer picture and provides more context to the analyst synthesizing the information.
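One way to picture connected components is as a single pipeline that passes each document through ingestion, labeling, and deployment without a manual hand-off. The sketch below is purely illustrative. The function names, the keyword rule standing in for a trained model, and the sample documents are all hypothetical and do not represent Primer's API.

```python
def ingest(raw_documents):
    """Ingestion: normalize whitespace and drop empty documents."""
    cleaned = (" ".join(doc.split()) for doc in raw_documents)
    return [doc for doc in cleaned if doc]


def label(documents, keywords):
    """Labeling: tag each document using a placeholder keyword rule."""
    return [
        {"text": doc, "flagged": any(k in doc.lower() for k in keywords)}
        for doc in documents
    ]


def deploy(labeled_documents):
    """Deployment: surface only the flagged documents to the analyst."""
    return [item["text"] for item in labeled_documents if item["flagged"]]


def run_pipeline(raw_documents, keywords):
    """Connect the three stages so each output feeds the next stage."""
    return deploy(label(ingest(raw_documents), keywords))


# Hypothetical sample input, used only for illustration.
raw = ["  Markets   fall on rate news ", "", "Local team wins final"]
alerts = run_pipeline(raw, keywords=["markets", "rate"])
```

Because each stage takes the previous stage's output directly, any one stage can be swapped out without changing how the others are called.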

"As we continue to build NLP models, it's going to be about connecting different AI components to build valuable pipelines and workflows," said Gourley.

Learn more about Primer's industrial-grade NLP solutions or how you can build models yourself with our NLP Platform to quickly explore and utilize exponentially growing text-based information.

Contact Primer here for more information and to access product demos.

Primer Enterprise

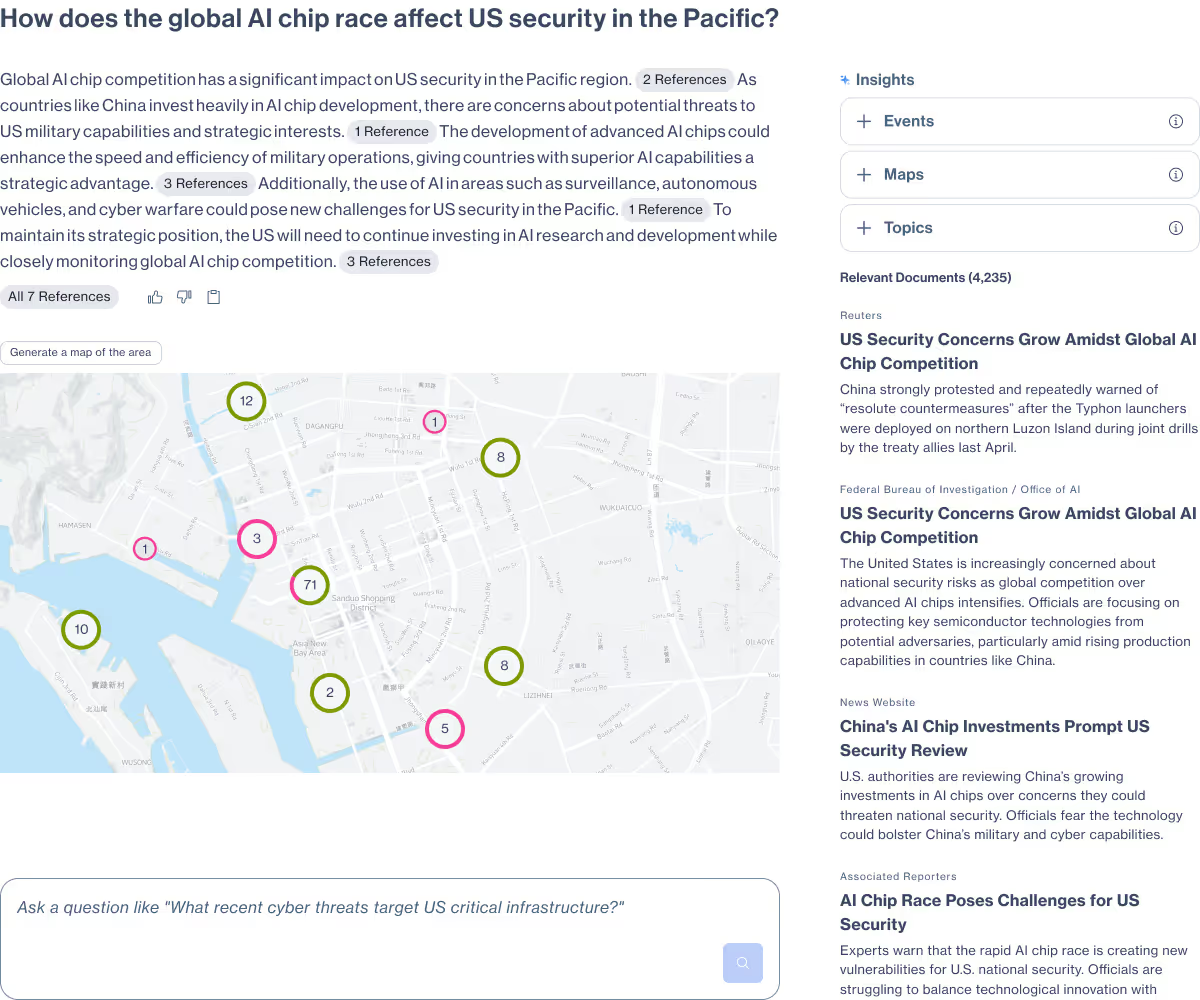

Informed, defensible analysis

Primer Enterprise is a secure AI platform that helps analysts and mission teams across the Intelligence Community, Defense, and Civilian agencies analyze massive volumes of unstructured data. It transforms fragmented reports, proprietary data, and open-source information into structured, traceable insight that supports faster, defensible decision-making.

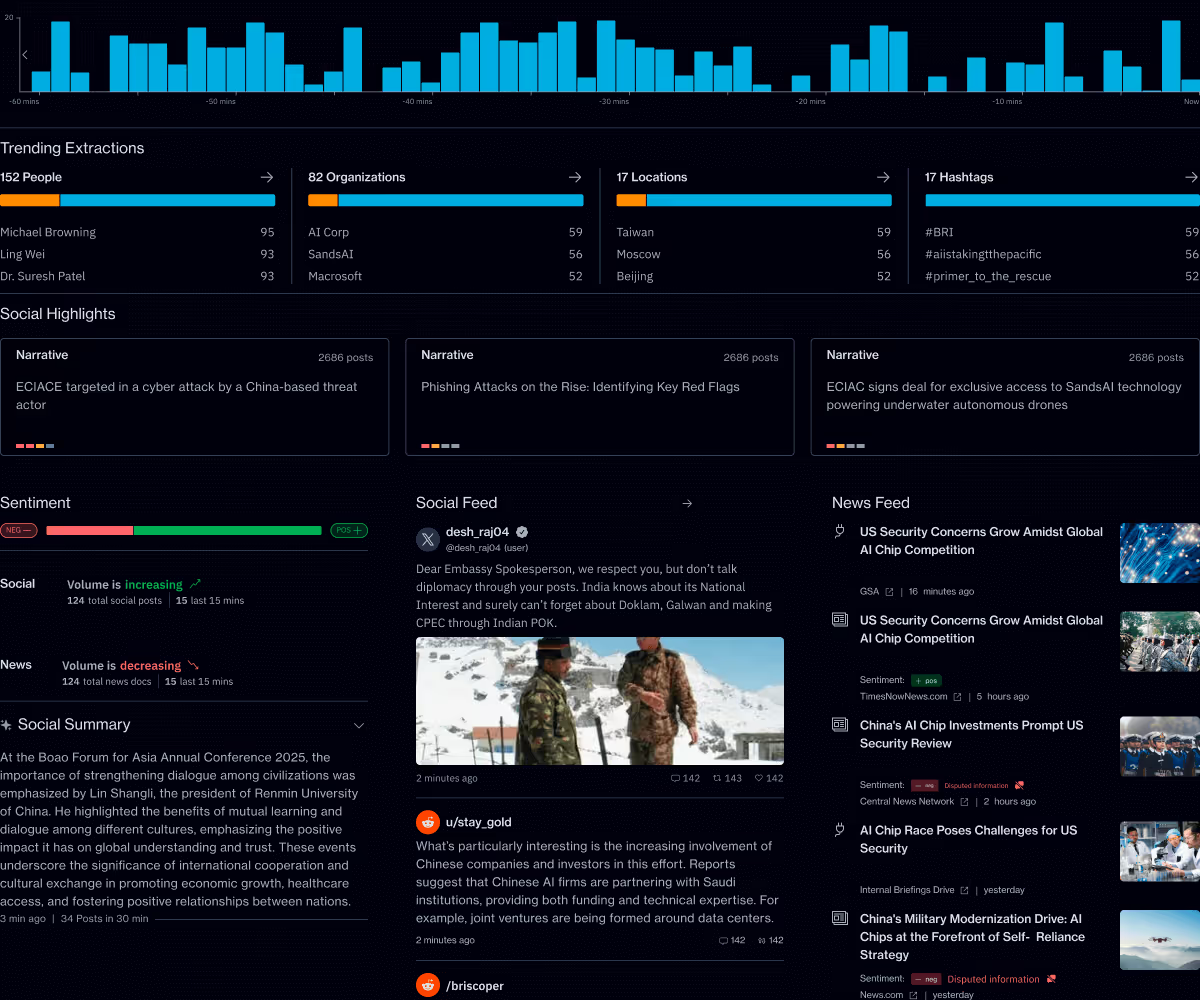

Primer Command

Real-time operational clarity

Primer Command is an AI-powered monitoring platform that helps mission teams keep track of narratives, track evolving topics, and detect emerging threats across global news and social media. It provides real-time visibility into the information environment so leaders can understand events as they unfold.