How Primer Built an Inference Triage Process Called BabyBear to Save GPU Time

BabyBear cuts GPU time and money on large transformer models

When you're processing millions of documents with dozens of deep learning models, things add up fast. There's the environmental cost of electricity to run those hungry models. There's the latency cost as your customers wait for results. And of course there's the bottom line: the immense computational cost of the GPU machines on-premises or rented in the cloud.

We figured out a trick here at Primer that cuts those costs way down. We're sharing it now (paper, code) for others to use. It is an algorithmic framework for natural language processing (NLP) that we call BabyBear. For most deep learning NLP tasks, it reduces GPU costs by a third to a half. And for some tasks, the savings are over 90%.

The basic idea is simple: The best way to make a deep learning model cheaper is to not use it at all, when you don't need it. The trick is figuring out when you don't need it. And that's what BabyBear does.

In our algorithm, the expensive deep learning model is called mamabear. As the documents stream in to be processed by mamabear, we place another model upstream: babybear. This model is smaller, faster, and cheaper than mamabear, but also less accurate. For example, babybear might be a classical machine learning model like XGBoost or Random Forest.

The babybear model can be whatever you want, as long as it produces confidence scores along with its predictions. We use babybear's confidence to decide whether an incoming document requires mamabear. If it's an easy one, babybear handles it and lets mamabear sleep. If it's a difficult one, babybear passes it to mamabear.
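The routing rule is easy to express in code. Below is a minimal sketch, assuming babybear returns a `(label, confidence)` pair and mamabear returns a label. The names `triage`, `TriageStats`, and `estimated_savings`, and the toy models, are hypothetical illustrations rather than Primer's code. The savings helper also assumes a fixed per-document cost for each model: babybear runs on every document, while mamabear runs only on the share it receives.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Assumed signatures: babybear returns (label, confidence); mamabear returns a label.
BabyModel = Callable[[str], Tuple[str, float]]
MamaModel = Callable[[str], str]


@dataclass
class TriageStats:
    handled_by_baby: int = 0
    handled_by_mama: int = 0


def triage(doc: str, baby: BabyModel, mama: MamaModel,
           threshold: float, stats: TriageStats) -> str:
    """Route one document: keep babybear's answer if it is confident enough."""
    label, confidence = baby(doc)
    if confidence >= threshold:
        stats.handled_by_baby += 1  # easy document: mamabear sleeps
        return label
    stats.handled_by_mama += 1  # hard document: wake up the expensive model
    return mama(doc)


def estimated_savings(fraction_skipped: float, cost_ratio: float) -> float:
    """Share of mamabear-only compute saved, assuming fixed per-document costs.

    cost_ratio = babybear cost / mamabear cost. Relative cost with triage is
    cost_ratio + (1 - fraction_skipped), so the savings are the difference from 1.
    """
    return fraction_skipped - cost_ratio


# Toy stand-ins for the two models, for illustration only.
def toy_baby(doc: str) -> Tuple[str, float]:
    if "great" in doc:
        return "positive", 0.97
    if "awful" in doc:
        return "negative", 0.96
    return "positive", 0.51  # low confidence: babybear is unsure


def toy_mama(doc: str) -> str:
    return "negative" if "not" in doc or "awful" in doc else "positive"


stats = TriageStats()
docs = ["great service", "awful food", "not what I expected"]
labels = [triage(d, toy_baby, toy_mama, threshold=0.9, stats=stats) for d in docs]
```

Here babybear answers the first two documents on its own, and only the ambiguous third one reaches mamabear. Note that the savings can be negative if babybear is rarely confident, since its own cost is paid on every document.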

How does babybear learn this task? During a "warm up" period, we send the stream of documents to mamabear, just as we would in production without the BabyBear framework. The babybear model directly observes mamabear as an oracle, making its own predictions to learn the skill. Once it has sufficient skill, it gets to work.

For example, we took this open-source sentiment analysis model as mamabear. For the babybear warm-up, we trained an XGBoost model using 10,000 inferences from mamabear as gold data. With hyperparameter optimization, this took 113 minutes. But babybear had already learned the task well enough to get to work after about 2,000 examples, less than half an hour of training.

Every NLP practitioner has created keyword filters upstream of expensive models to save unnecessary processing. BabyBear just replaces that human data science work with machine learning.

All you need to do is tweak one parameter: the performance threshold. It determines how much loss in overall F1 score you're willing to accept in return for computational savings. Whatever you set it to (10%, 5%, or 0%), BabyBear will save as much compute as possible by adjusting its own confidence threshold to meet that target.
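One way to picture that self-adjustment is a search over candidate confidence thresholds on held-out data. The sketch below is illustrative, and its details are assumptions rather than Primer's implementation. It treats mamabear's labels as gold, as in the warm-up, so running mamabear on everything scores the baseline F1. The loss budget is applied relative to that baseline. The sketch uses binary labels and the positive-class F1. `calibrate_threshold` and `f1_binary` are hypothetical names.

```python
from typing import List, Sequence, Tuple


def f1_binary(y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1) -> float:
    """F1 score for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def calibrate_threshold(mama_labels: List[int], baby_preds: List[int],
                        baby_conf: List[float], max_f1_loss: float) -> Tuple[float, float]:
    """Find the confidence threshold that skips the most mamabear calls while
    keeping F1 (scored against mamabear's labels) within max_f1_loss of baseline.

    Returns (threshold, fraction of documents babybear would handle).
    """
    baseline = f1_binary(mama_labels, mama_labels)  # mamabear runs on everything
    target = baseline * (1.0 - max_f1_loss)
    best = (float("inf"), 0.0)  # no feasible threshold: mamabear handles everything
    for t in sorted(set(baby_conf), reverse=True):
        # Confident documents keep babybear's label; the rest get mamabear's label.
        combined = [b if c >= t else m for m, b, c in zip(mama_labels, baby_preds, baby_conf)]
        if f1_binary(mama_labels, combined) >= target:
            skipped = sum(c >= t for c in baby_conf) / len(baby_conf)
            if skipped > best[1]:
                best = (t, skipped)
    return best


mama = [1, 1, 0, 0, 1, 0]
preds = [1, 1, 0, 1, 0, 0]
conf = [0.95, 0.90, 0.85, 0.60, 0.55, 0.80]
strict = calibrate_threshold(mama, preds, conf, max_f1_loss=0.0)
relaxed = calibrate_threshold(mama, preds, conf, max_f1_loss=0.15)
```

With no loss allowed, the threshold settles at 0.8 and babybear handles 4 of 6 documents. Allowing a 15% F1 loss lowers it to 0.6, letting babybear take 5 of 6 at an F1 of about 0.86.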

What I've described so far is the simplest version of BabyBear. It applies to document classification, for example, where babybear is a single model upstream of mamabear and is learning to perform the same task. In our paper, we describe more complicated versions of BabyBear. For NLP tasks such as entity recognition, we achieve greater savings using a circuit of multiple babybear models. And some of those babybear models can be distilled (cheaper, faster) versions of mamabear. But the same algorithm applies.
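A simple way to chain several babybears is a sequential cascade. In a cascade, the cheapest model answers if it is confident. Otherwise the document falls through to the next model, and finally to mamabear. The sketch below assumes that arrangement. The paper's circuits may be wired differently, so treat this as an illustration of the idea, not the published topology. The stage tuple layout, the per-stage costs, and the toy models are all hypothetical.

```python
from typing import Callable, List, Tuple

# Assumed stage layout: (name, model returning (label, confidence), threshold, cost).
Stage = Tuple[str, Callable[[str], Tuple[str, float]], float, float]


def cascade(doc: str, babybears: List[Stage],
            mama: Callable[[str], str], mama_cost: float) -> Tuple[str, str, float]:
    """Try babybears cheapest-first; escalate to mamabear only if none is confident.

    Returns (label, name of the stage that answered, total compute cost spent).
    """
    spent = 0.0
    for name, model, threshold, cost in babybears:
        label, confidence = model(doc)
        spent += cost  # every consulted stage has already run, confident or not
        if confidence >= threshold:
            return label, name, spent
    return mama(doc), "mamabear", spent + mama_cost


# Toy models, for illustration only.
def keyword_model(doc: str) -> Tuple[str, float]:
    return ("positive", 0.99) if "excellent" in doc else ("negative", 0.2)


def distilled_model(doc: str) -> Tuple[str, float]:
    return ("negative", 0.92) if "refund" in doc else ("positive", 0.6)


def toy_mama(doc: str) -> str:
    return "negative" if "refund" in doc or "late" in doc else "positive"


stages: List[Stage] = [("keyword", keyword_model, 0.9, 1.0),
                       ("distilled", distilled_model, 0.85, 10.0)]
results = [cascade(d, stages, toy_mama, mama_cost=100.0)
           for d in ["excellent product", "asked for a refund", "arrived late"]]
```

Each escalation adds the cost of every stage already consulted. This is why, in this sketch, the cheapest and most often confident models belong at the front of the chain.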

BabyBear is the framework that we use here at Primer to automate some of the data science we once laboriously did ourselves. For the vast majority of NLP tasks—basically everything not requiring text generation—BabyBear can help prevent needless draining of your wallet and the electrical grid.

Primer Enterprise

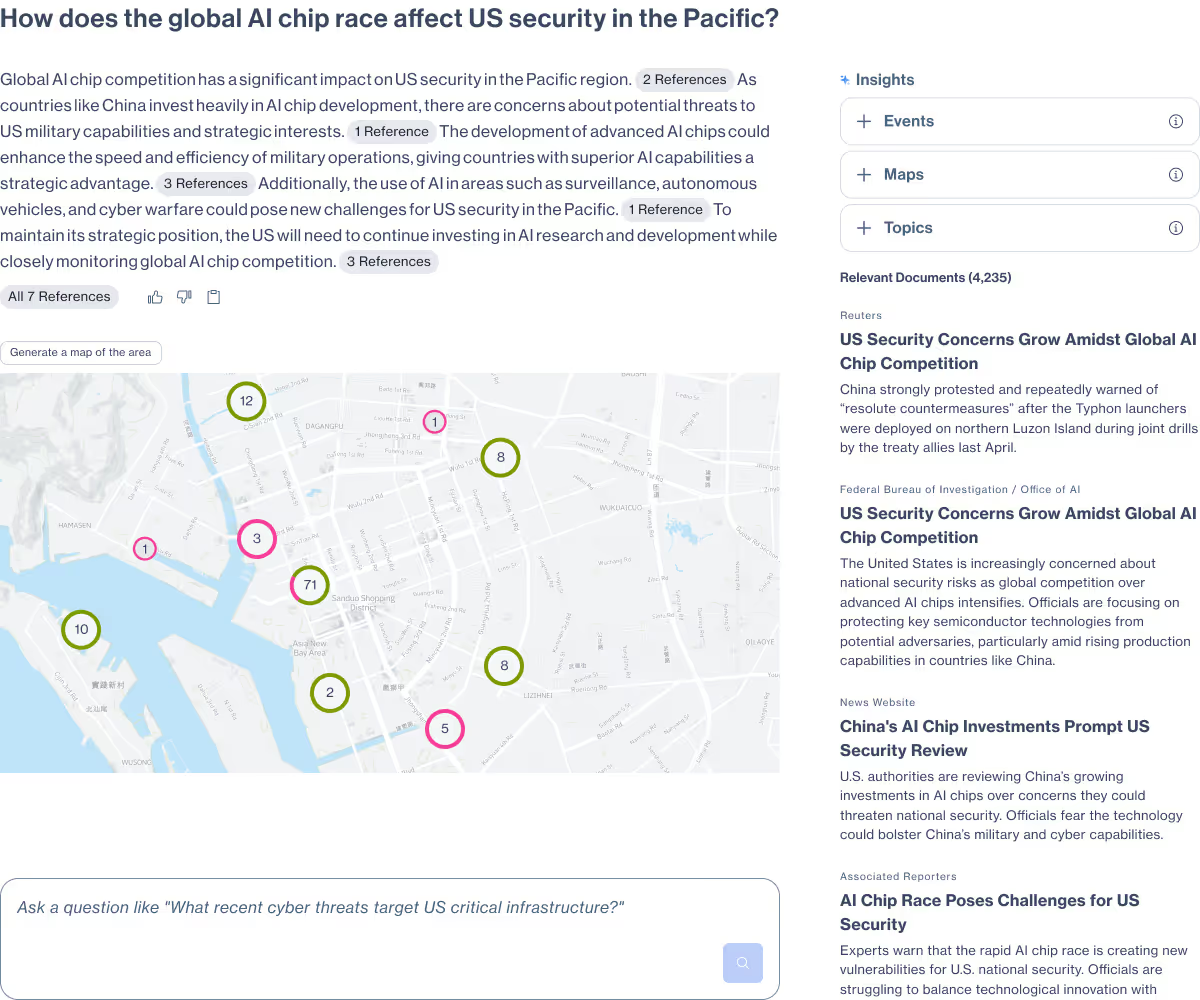

Informed, defensible analysis

Primer Enterprise is a secure AI platform that helps analysts and mission teams across the Intelligence Community, Defense, and Civilian agencies analyze massive volumes of unstructured data. It transforms fragmented reports, proprietary data, and open-source information into structured, traceable insight that supports faster, defensible decision-making.

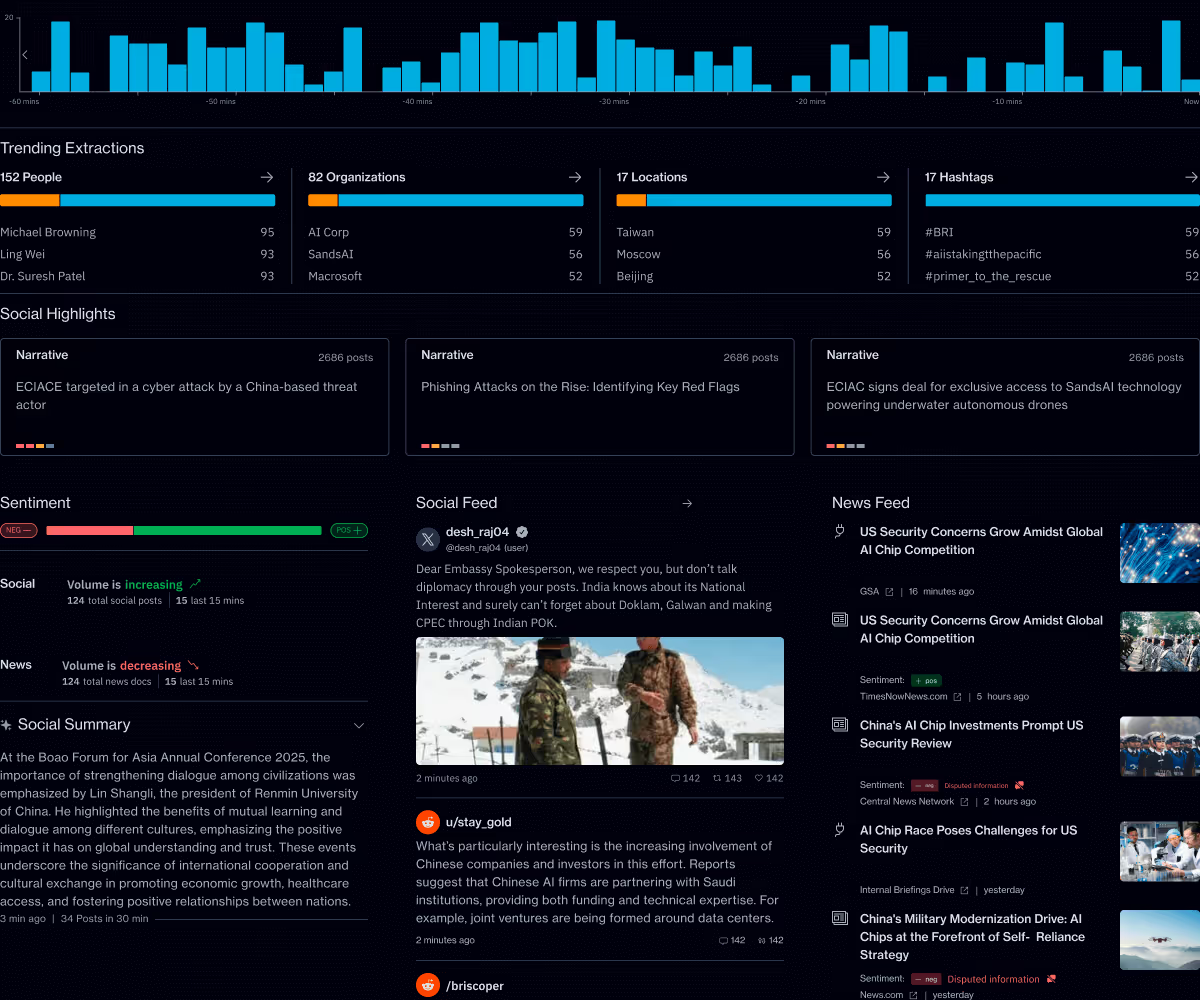

Primer Command

Real-time operational clarity

Primer Command is an AI-powered monitoring platform that helps mission teams keep track of narratives, track evolving topics, and detect emerging threats across global news and social media. It provides real-time visibility into the information environment so leaders can understand events as they unfold.