How Machine Learning Impacts National Security

In early April, Primer’s Senior Director for National Security, Brian Raymond, sat down with Cipher Brief COO Brad Christian to discuss how machine learning is impacting national security. What follows is a lightly edited version of the conversation.

In the latest edition of our State Secrets podcast, Cipher Brief COO Brad Christian talks with Brian Raymond, who works for Primer, one of our partners at The Cipher Brief’s Open Source Collection, featured in our M-F daily newsletter.

At Primer, Brian helps lead their national security vertical; in other words, their intelligence and military customers, but also their broader federal practice. That means he’s involved in everything from sales to advising on product development and overseeing current customer engagements.

The Cipher Brief: Let’s dive into these emerging technologies and how they relate to national security. We’re talking today about machine learning and artificial intelligence. These are terms becoming more talked about and prominent in the news. But frankly, most people still don’t have a good grasp of what they mean. Can you give us a high-level perspective on what it means when we hear the terms ‘artificial intelligence’ and ‘machine learning’, and why do I need to care about them?

Raymond: That’s a great question, and maybe I’ll just back up a little bit, in terms of what sparked my interest when I joined Primer and why this field is so exciting. Previously I was at the CIA, primarily as a political analyst, as well as having served in several additional roles. From the CIA, I went to the White House. I served as a country director from 2014 to 2015 and was able to see the intelligence collection process in terms of analysis and decision making from many different angles.

Fast forward to 2018 when I had the opportunity to join Primer. I am not a tech expert by any means. I don’t have a background in machine learning or artificial intelligence. My interest sparked when I saw what machine learning and artificial intelligence could do to accelerate the mission.

At the highest level, machine learning is different from general artificial intelligence in that machine learning is leveraging what’s called a neural net. It replicates in some ways the structure of the brain, where you have neurons and synapses, to build very complex models. Hundreds of millions of nodes help automate a process that’s typically done by a human today.

And so, let’s unpack a few practical examples. Most listeners on this podcast are probably familiar with object detection or object recognition. We can feed an image into a particular algorithm and determine, okay, is that a dog, or is that a car? Solving this problem has been the focus for at least 15 years. Now the algorithms are getting really, really good, to the point where they’re fed into self-driving cars and weapon systems.

There are many different areas where the technology is beginning to take hold. Instead of having large teams of humans that are clicking dog or car and sorting different imagery, this can now be done in an automated fashion at scale and speed by algorithms.

That’s one example, and it’s something that took hold and became operationalized. It was injected into workflows about six or eight years ago. There’s still much work in progress, but now it’s being commercialized. It’s becoming increasingly mature.

There are other areas of AI that are a lot thornier, and there has been slower progress. One of those areas is the realm of natural language and human spoken language – think Siri on the phone or Alexa. At the highest level, algorithms are intended to help accelerate and augment rote tasks that humans are undertaking to free them up to work on higher-level tasks.

The Cipher Brief: These issues you’re talking about are critical. Not just to make life easier or to make things more efficient, but so that America and the military can maintain its innovative and technological edge. It’s seriously challenged for the first time. In terms of machine learning and natural language processing, what are some of the ways that you see this operationalized in the national security space, and what are some of the things that we should be looking for in the next three to five years?

Raymond: That’s a good question. To respond, I’d probably break it down into three key messages. I’ll unpack each one. The first is that there has been much learning over the last several years. It happened when pairing operators and analysts with algorithms to impact mission. It requires a partnership, and in some cases, an entirely different organizational model than exists within the national security organizations today.

A partnership approach is necessary to use and fully leverage these algorithms.

The second, especially in the world of natural language processing but also more broadly, we’ve seen an absolute explosion in the performance of these algorithms over the past 18 months. The algorithm’s performance has mostly gone under the radar in national news and most of the publications that I and others read.

We’re really in a golden age right now, and a lot of new and exciting use cases are unlocking because of these performance gains.

The third thing I’ll talk a little bit about is that the use cases are becoming more crystallized, especially for natural language algorithms. The three categories are natural language processing, natural language understanding, and natural language generation.

We’re teaching algorithms to not only be able to identify people, places, and organizations, but to understand and then also generate new content based on that.

The use cases for that, quite frankly, have been a little mysterious. Primer was founded in 2015, before this golden age began in late 2018 with the release of BERT, an algorithm developed by researchers at Google.

Before then, we saw that these algorithms were brittle. They were good at narrow tasks, but they required much training, and they were difficult to port across different document types.

With those constraints, it was challenging to find wide channels to play in and to add value for the end-users. Today, three use cases transcend all of our national security customers where we’re finding that the algorithms are fantastic at augmenting what humans are already doing.

I’ll just call the first one ‘finding needles in a haystack.’

Suppose you are concerned with supply chains, and you have 5,000 suppliers that you care about for some type of complex system that you’re building. These suppliers are distributed globally, and you’re concerned about disruptions to the supply chains or malicious acts, for example.

But how do you monitor news or bad things happening for 5,000 companies? That’s a lot of Google news alerts, for example.

We’re able to train algorithms that continuously scan hundreds of thousands or millions of documents. They can look for instances in which a small supplier may have been subject to a cybersecurity attack or their headquarters burned down, or their CEO is caught in a scandal and immediately cluster articles or reports around that and then surface those for review.
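As a rough illustration of the scan-cluster-surface loop described above, here is a minimal sketch. It assumes plain keyword matching in place of trained models, and the supplier names, risk terms, and document IDs are all hypothetical. It is not a description of Primer's actual system.

```python
from collections import defaultdict

# Hypothetical watchlist and risk vocabulary, chosen only for illustration.
SUPPLIERS = ["Acme Fasteners", "Northwind Optics"]
RISK_TERMS = {
    "cyber": ["ransomware", "data breach", "cyberattack"],
    "facility": ["fire", "burned down", "explosion"],
    "leadership": ["scandal", "indicted", "resigned"],
}


def flag_documents(documents):
    """Cluster document IDs by (supplier, risk category) when both appear in the text."""
    clusters = defaultdict(list)
    for doc_id, text in documents.items():
        lowered = text.lower()
        for supplier in SUPPLIERS:
            if supplier.lower() not in lowered:
                continue
            for category, terms in RISK_TERMS.items():
                # Naive substring match; a trained classifier would replace this.
                if any(term in lowered for term in terms):
                    clusters[(supplier, category)].append(doc_id)
    return dict(clusters)


documents = {
    "doc-1": "Acme Fasteners confirmed a ransomware incident at its main plant.",
    "doc-2": "Northwind Optics headquarters burned down overnight.",
    "doc-3": "Acme Fasteners disclosed a data breach affecting suppliers.",
    "doc-4": "Local bakery wins regional award.",
}

# Surface each cluster for human review.
for key, doc_ids in sorted(flag_documents(documents).items()):
    print(key, doc_ids)
```

Substring matching like this produces false positives (for example, "fire" also matches "fired"), which is part of why learned models are used for the real task.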

Today, this needle-in-a-haystack problem is being tackled by large groups of people, and they’re not even able to wrap their arms around all of the consumable information.

The second use case is ‘compression and summarization.’

Recently a study looked at analysts that covered mid-tier type countries. Not much is written about countries like Paraguay, for example. In the mid-nineties, you may have had to read about 20,000 words per day to stay up on what’s going on with that particular country.

Fast forward to 2016, so four years ago, and you had to read around 200,000 words per day to stay abreast of developments. And the forecast was that between 2016 and 2025, it was going to increase tenfold, so from 200,000 to 2,000,000 words per day.

Whether you’re covering a country or a particular organization or a company issue, there’s a tremendous amount of available information that is required to stay ahead of developments.

Information is growing at an exponential pace, and so you can’t hire your way out of the problem. You need to find ways to compress and summarize all that information, and you do that by pairing analysts or operators with algorithms.

Compression and summarization is the second key area where users and organizations are finding tremendous benefits.
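To make the idea of compression concrete, here is a minimal sketch of extractive summarization: keep the sentences whose words are most frequent across the whole text. This word-frequency heuristic is a simple stand-in chosen for illustration, not the neural approach discussed in the interview. The function name, stopword list, and sample text are assumptions.

```python
import re
from collections import Counter

# Small illustrative stopword list (an assumption, not a standard resource).
STOPWORDS = {"the", "a", "an", "of", "to", "in", "and", "on", "for", "is", "was", "its"}


def tokenize(text):
    """Lowercase word tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def summarize(text, max_sentences=1):
    """Return the highest-scoring sentences, kept in their original order."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(tokenize(text))

    def score(sentence):
        tokens = tokenize(sentence)
        # Average word frequency, so long sentences are not favored automatically.
        return sum(freq[t] for t in tokens) / len(tokens) if tokens else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])
    return " ".join(sentences[i] for i in keep)


report_text = (
    "The port strike entered its second week. "
    "The strike has halted grain exports from the port. "
    "Officials met on Tuesday."
)
print(summarize(report_text, max_sentences=1))
```

Raising `max_sentences` trades compression for coverage, which is the same dial an analyst would tune when deciding how much of the daily reading load to hand off.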

The last use case is what we call ‘breaking the left-screen, right-screen workflow.’

Left-screen right-screen is a broad workflow that has existed since the dawn of modern intelligence analysis in World War Two. Analysts read reports that are coming in on their left screen. Then they take insights from those reports or details that are relevant or that they care about and curate them into some type of knowledge graph on the right screen. Today the analyst turns it into an Excel spreadsheet, a Wiki, an email, or a Word document, or it might become a final report.

We’re getting really, really good as a machine learning community, at automating that jump.

Machine learning can find all the people in these 10,000 documents and then find all the details about these people and then determine how all these people link to one another. It can continuously create new profiles for people that are mentioned, including those who are just popping up, and then show what further information has been discovered.
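A toy version of that left-screen to right-screen jump might look like the sketch below. It assumes a naive regular expression (two adjacent capitalized words) as a stand-in for a trained entity recognizer, and it treats co-occurrence in the same document as a link. The people and documents are fictional.

```python
import re
from collections import defaultdict
from itertools import combinations

# Naive stand-in for a trained entity recognizer: two adjacent capitalized words.
NAME_PATTERN = re.compile(r"\b[A-Z][a-z]+ [A-Z][a-z]+\b")


def build_profiles(documents):
    """Return (mentions, links): who appears where, and who appears together."""
    mentions = defaultdict(set)  # person -> IDs of documents mentioning them
    links = defaultdict(set)     # (person_a, person_b) -> IDs of shared documents
    for doc_id, text in documents.items():
        people = sorted(set(NAME_PATTERN.findall(text)))
        for person in people:
            mentions[person].add(doc_id)
        # Every pair of people in the same document is recorded as linked.
        for pair in combinations(people, 2):
            links[pair].add(doc_id)
    return mentions, links


documents = {
    "doc-1": "Maria Lopez met Omar Haddad in Geneva.",
    "doc-2": "Omar Haddad later wired funds to Chen Wei.",
}

mentions, links = build_profiles(documents)
for person, doc_ids in sorted(mentions.items()):
    print(person, sorted(doc_ids))
for pair, doc_ids in sorted(links.items()):
    print(pair, sorted(doc_ids))
```

Re-running `build_profiles` as new documents arrive is the simplest way to picture profiles being continuously updated for people who are just popping up.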

Natural language processing has the potential to unlock hundreds of thousands of hours of manual curation still done in 2020. We’re finally at a point with the performance of the algorithms where we can begin automating a lot of that work and freeing people up to do what they’re best at, which is being curious, pursuing hunches, and thinking about second or third-order analysis. And so that’s what’s really exciting about where we’re at today.

The Cipher Brief: What do you see in terms of acceptance of these new approaches amongst these organizations? Because we’re still in a time where there’s a disparity amongst skill sets and knowledge and understanding as it relates to not just advanced technology but basic technology in many organizations.

What you’re talking about now is blurring the line between some of the most advanced technology that’s out there and the people working with it, who may not understand it, or may not be open or accepting of it.

What do you see in terms of how this is being accepted and practically used in organizations where it may come into contact with someone who’s not from a tech background and has to learn how to work with this new technology and, most importantly, trust it?

Raymond: That’s a great question. It brought to mind something that Eric Schmidt said a couple of years ago, which was, “The DoD doesn’t have an innovation problem. It has an innovation adoption problem.”

I think there’s much truth to that, but since Mr. Schmidt made the quote, there’ve been some incredibly exciting developments across the IC and DoD. We’ve benefited tremendously through our partnership with In-Q-Tel, which you know originally was the venture capital arm of the CIA and represented the IC and DoD. Through really innovative programs like AFWERX, the Air Force has rapidly identified and integrated technology into its mission.

There’s also work going on with the DIU and the Joint Artificial Intelligence Center. Additional work is going on with the Under Secretary of Defense for Intelligence. We’re witnessing this explosion of activity throughout the space and creating novel and exciting contracting pathways, with vast amounts of money invested in artificial intelligence.

There’s a new sense of urgency that you didn’t see before. Secretary Esper has been continually saying that artificial intelligence is one of the top priorities, if not the top priority, for the Department of Defense.

And we’ve seen that reflected in the spending budget this year. We also see it in the posture of organizations that are recognizing the need to innovate. We see prioritization and pathways and funding.

The challenge with all of this is that although algorithms and learning solutions reside in the realm of SaaS solutions, they’re fundamentally different from buying the Microsoft Office Suite and getting it loaded onto your computer and then using it.

I’d like to share an anecdote. At Primer, we have an office in DC, but we also have our primary offices in San Francisco’s financial district. Every single day we look out the window and see dozens if not hundreds of self-driving cars pass by our building. Different types of electronics are mounted to the rooftop. But you also see people behind the wheel which means some type of training is going on.

Tesla and almost all the major auto manufacturers have “self-driving” cars, but it’s under limited circumstances, in specific conditions. The reality is that there is just an enormous amount of training that is required still in that realm of self-driving vehicles to make it a consumer product.

Within that context, when the IC and DoD deploy object and natural language algorithms to the hardest of the hard problems they grapple with, they have to do it at speed. Organizations fundamentally need to be reconfigured in some cases to make maximum use of machine learning solutions.

What that means is the need to train often, and to draw on the expertise needed to train the models. Models are only as good as the training and the training data that they receive. Usually the expertise resides in classified networks and in the heads of officers, analysts, and operators engaged in this every day.

It requires continuous engagement because serious questions arise when training models to perform specific tasks.

- Who in the organization owns the model?

- Who is in charge of updating the model and reviewing training data that’s produced across the organization?

- Who integrates it?

- How should the organization think about it?

And then finally, where do we really want to go in terms of what tasks are going to get us the most bang for the buck early on?

Almost 80% or 85% of commercial AI innovation initiatives have not delivered what the folks initially thought they would. We believe that that number will come down as average performance increases and as there is learning that occurs both on the customer side and on the company side. However, these are still relatively early days, and these are complicated technologies to leverage effectively. It requires a tight partnership from the top down in these organizations to make it a success.

The Cipher Brief: What’s your estimate on when we’ll see this adopted, when we’re comfortable with it and it’s part of our everyday life in the national security community?

Raymond: That’s a great question. I think soon. There’s one thing we haven’t talked about. Cloud infrastructure is being put in place that will unlock a lot of opportunities.

The JEDI contract with DoD has been in the headlines recently. Having a common cloud infrastructure entails computing power, and the ability to move data across enclaves easily is essential for operationalizing machine learning solutions at scale.

Getting that foundation in place will unleash much innovation in different areas.

Coming back to the topic of performance gains, for example, and the task of finding people or locations or organizations in documents. If you hire and train a group of people and have each go through a thousand documents and then ask them to find all the people, places, and organizations across all those documents, usually around 95% precision is typical for humans.

Humans will miss some or may not realize that two differently spelled names in the document refer to the same person, for instance. We’re at the point where we’re approaching 96%-97% precision for a number of these tasks, and that’s just in the past six months.
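For readers unfamiliar with the metric, precision is the share of extracted entities that are correct. The sketch below computes it alongside recall (the share of true entities that were found), which is added here for contrast because missed entities show up in recall rather than precision. The function name and the sample entities are illustrative assumptions.

```python
def precision_recall(predicted, gold):
    """Compare extracted entities against a hand-labeled reference set."""
    predicted, gold = set(predicted), set(gold)
    true_positives = len(predicted & gold)
    # Precision: of everything extracted, how much was right?
    precision = true_positives / len(predicted) if predicted else 0.0
    # Recall: of everything that should have been found, how much was?
    recall = true_positives / len(gold) if gold else 0.0
    return precision, recall


# Hypothetical reference labels and extractor output for one document.
gold = {"Maria Lopez", "Omar Haddad", "Geneva", "Primer"}
predicted = {"Maria Lopez", "Omar Haddad", "Geneva", "Tuesday"}

precision, recall = precision_recall(predicted, gold)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

In this toy case three of four extractions are correct and three of four true entities are found, so both values come out to 0.75.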

These gains, where we’re at or above human-level performance on specific tasks, will gain traction quite quickly.

Finally, the workflows for how we integrate this at scale for these organizations have started to clarify as well. And that’s this concept we call CBAR: you’ve got to connect to the data sources first. This common cloud infrastructure is going to unlock more opportunities. We see a lot of really cool and exciting innovation going on there on the connecting side.

You have to connect and then build the models – lightweight, straightforward user interfaces for training the models exist and are in use today.

You have to unleash these models on the data, analyze it, and then inject it into workflows. That’s stylizing as well, and then you can feed the insights back out into whatever products or systems the end-user cares about through APIs or various reporting mechanisms.

A couple of years ago, the connect, build, analyze, report flow was not clear. It needed to be architected. It’s now going to be the standard. And, with the infrastructure coming into place and the learning that is occurring with these organizations, I think we’re going to witness a virtuous cycle for innovation.
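The connect, build, analyze, report flow can be pictured as four small stages chained together. The sketch below is a toy under stated assumptions: the function names mirror the four steps but are hypothetical, the "model" is just a keyword set learned from labeled examples, and the report is a JSON string standing in for an API response.

```python
import json


def connect(sources):
    """Connect: pull raw documents from each data source into one list."""
    return [doc for source in sources for doc in source]


def build(labeled_examples):
    """Build: 'train' a toy model, here a keyword set taken from relevant examples."""
    keywords = set()
    for text, is_relevant in labeled_examples:
        if is_relevant:
            keywords.update(text.lower().split())
    return keywords


def analyze(model, documents):
    """Analyze: score each document by how many learned keywords it contains."""
    return [
        {"text": doc, "score": len(model & set(doc.lower().split()))}
        for doc in documents
    ]


def report(results, threshold=1):
    """Report: emit findings at or above the threshold as JSON for downstream systems."""
    hits = [r for r in results if r["score"] >= threshold]
    return json.dumps(hits, indent=2)


# Two hypothetical data sources and a one-example training set.
sources = [["port strike halts exports"], ["bakery wins award"]]
model = build([("strike", True), ("award", False)])
output = report(analyze(model, connect(sources)))
print(output)
```

Keeping each stage as a separate function reflects the point made above: once the flow is standardized, any one stage can be swapped out (a new data source, a retrained model) without rearchitecting the rest.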

The Cipher Brief: Any final thoughts? If you had to give one takeaway for our folks, these organizations, the national security community that you’re talking about just from this conversation, what would it be?

Raymond: The overarching message that I would communicate is commitment. These initiatives, whether or not it’s in the natural language domain where Primer is playing or other machine learning domains, they require an investment of an organization and a commitment to make use of it.

And it’s just a fundamentally different problem set than many other technological solutions, whether they’re hardware or software solutions, that we’ve seen over the past couple of decades. And that’s a risky endeavor.

But, as you mentioned earlier, our competition, the Chinese, the Russians, the Iranians, and others are making significant investments in these areas. They are vertically integrating, and we’ve always taken a different approach than that. And what we see here is just incredible innovation coming out of the technology sector.

Many companies are incredibly eager to do business with the IC and DoD and contribute to their missions. The level of technological maturity is reaching a point where numerous opportunities have been unlocked that didn’t exist for them even in 2019 or 2018.


THE AUTHOR IS BRIAN RAYMOND

Brian joined Primer after having worked in investment banking and today helps lead Primer’s National Security Group. While in government, Brian served as the Iraq Country Director on the National Security Council. There he worked as an advisor to the President, Vice President, and National Security Advisor on foreign policy issues regarding Iraq, ISIS, and the Middle East. Brian also worked as an intelligence officer at the Central Intelligence Agency. During his tenure at CIA, he drafted assessments for the President and other senior US officials and served as a daily intelligence briefer for the White House and State Department. He also completed two war zone tours in support of counterterrorism operations in the field. Brian earned his MBA from Dartmouth’s Tuck School of Business and his MA/BA in Political Science from the University of California, Davis.

Learn more about The Cipher Brief’s Network.

Primer Enterprise

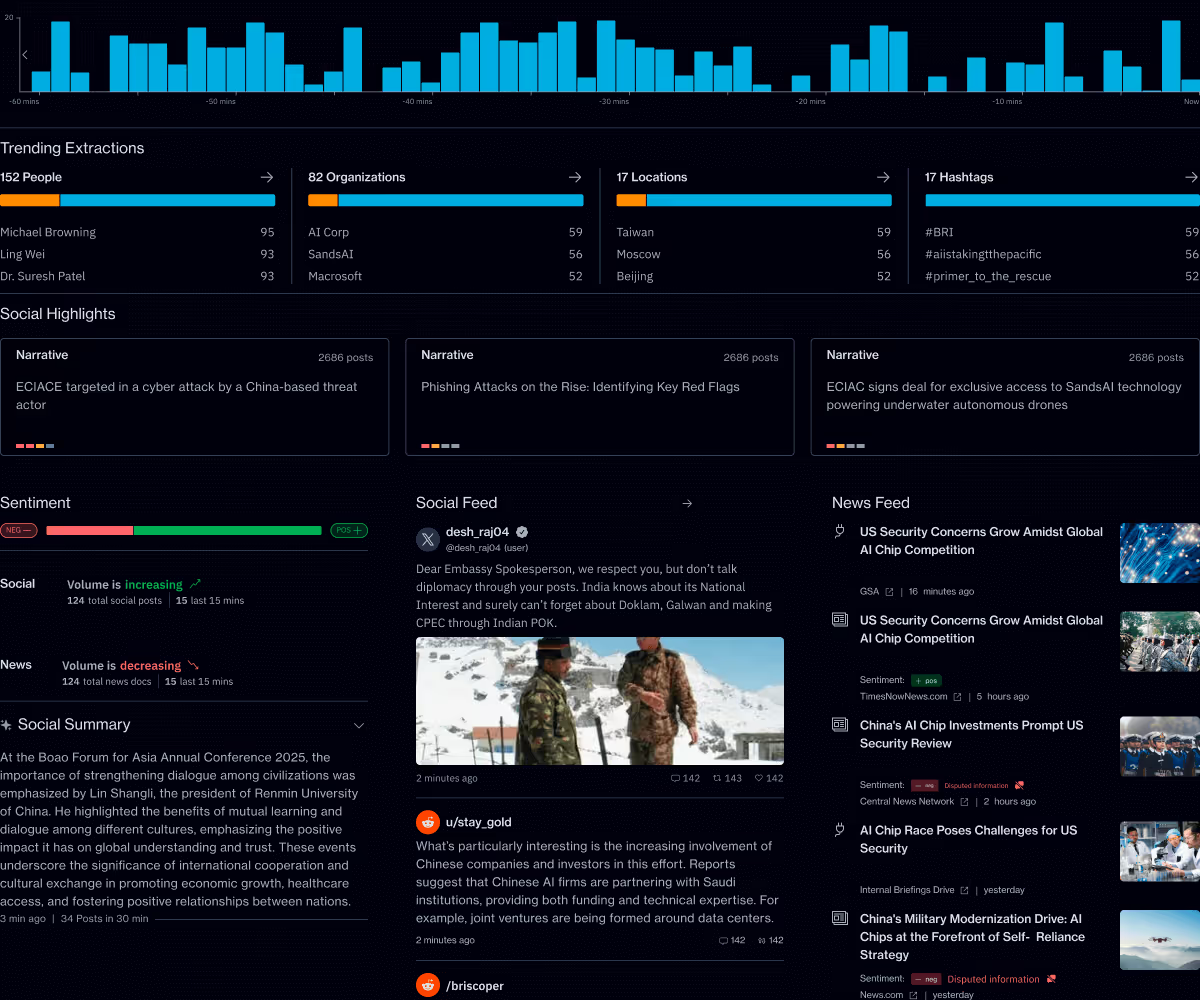

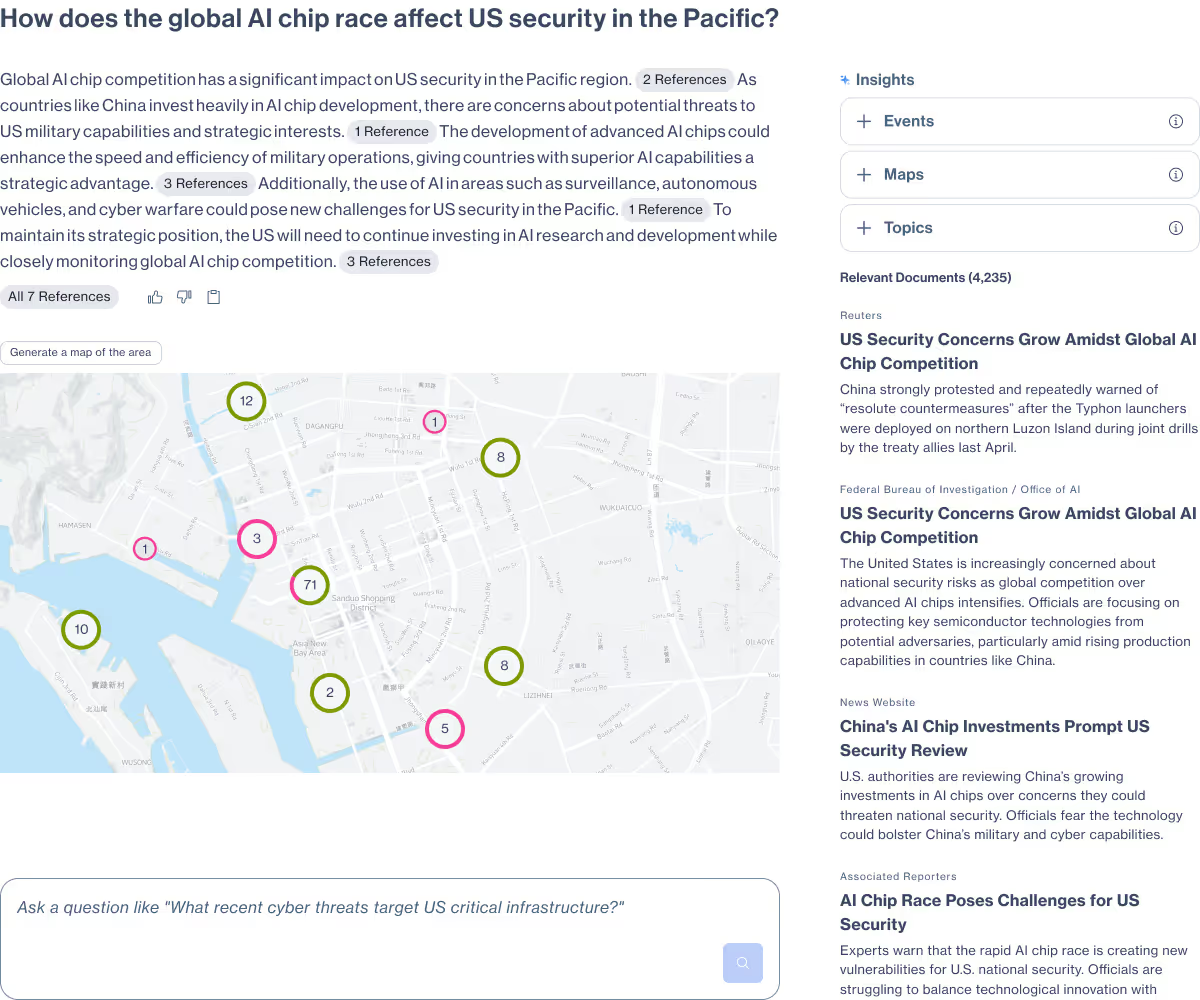

Informed, defensible analysis

Primer Enterprise is a secure AI platform that helps analysts and mission teams across the Intelligence Community, Defense, and Civilian agencies analyze massive volumes of unstructured data. It transforms fragmented reports, proprietary data, and open-source information into structured, traceable insight that supports faster, defensible decision-making.

Primer Command

Real-time operational clarity

Primer Command is an AI-powered monitoring platform that helps mission teams keep track of narratives, track evolving topics, and detect emerging threats across global news and social media. It provides real-time visibility into the information environment so leaders can understand events as they unfold.