The NLP Revolution Is Dead, Long Live NLP!

The NLP revolution kicked off in 2018 when BERT dropped. In 2019 and 2020, it was one mind-bending breakthrough after another. Generative language models began writing long-form text on demand with human-level fluency. Our benchmarks of machine intelligence (SNLI, SQuAD, GLUE) got clobbered. Many wondered if we were measuring the wrong things with the wrong data. (Spoiler: yes.) Suddenly, we no longer needed tens of thousands of training examples to get decent performance on an NLP task. We could pull it off with mere hundreds. And for some NLP tasks, no training data was needed at all. Zero-shot learning had become a thing.

Over the past year, NLP has cooled down. Transformer architecture, self-supervised pre-training on internet text — it seems like this paradigm is here to stay. Welcome to the normal science phase of NLP. So, what now?

Time to industrialize

Efficiency, efficiency, efficiency, and more efficiency. The cost of training and serving giant language models is outstripping Moore's law. So far, the bigger the model, the better the performance. But if you can't fine-tune these models on fresh data for new NLP tasks, then they are only as good as your prompt engineering. (And trust me, prompt "engineering" is more art than science.) The focus on efficiency is all about industrializing NLP.

The NLP benchmarks crisis is another sign that we're in the normal science phase. Here's a dirty secret that everyone already knows: The purpose of today's NLP benchmarks is to publish papers, not to make progress toward practical NLP. We are getting newer, better benchmarks, such as Big-Bench and MMLU, but we likely need an entirely different approach. The Dynabench effort, which has humans write examples designed to fool current models, is an interesting twist. But until we stop using performance metrics known to be broken (looking at you, ROUGE), industrial NLP will be harder to achieve.

Normal science is applied science. We are moving from "Wow, look at the impressively fluent garbage text my model generates!" to "Wow, I just solved a million-dollar NLP problem for a customer in two sprints!"

NLP is eating ML

But meanwhile, another revolution is brewing on the edges of NLP. The phrase "Software is eating the world" became "ML is eating software." Now it looks like "NLP is eating ML," starting with computer vision.

The first nibble was DALL-E. Remember when you first saw those AI-generated avocado armchairs? That was cool. But then along came CLIP+VQGAN. And just last month, GLIDE made it possible to iteratively edit images generated by text prompts with yet more text prompts. This could be the future of Photoshop.

The trend is the merging of cognitive modalities into a single latent space. Today, it's language and photos. Soon, it will be language and video. Why not a joint representation of language, vision, and bodily movement? Imagine generating a dancing robot from a text prompt. That's coming.

Go beyond English

Bilingual translation models lost the throne in 2021. For the first time, a multilingual model beat them all at WMT. That was a big moment that surprised no one. Machine translation has caught up with the multi-task trend seen across the rest of NLP. Learning one task often helps a model do others, because no task in NLP is truly orthogonal to the rest.

The bigger deal is the explosion in non-English NLP. Chinese is on top, which is no surprise given the massive investment. There are now multiple Chinese language models as big as or bigger than GPT-3, including Yuan and WuDao. But we also have a Korean language model, along with a Korean version of GLUE (benchmark problems notwithstanding). And most exciting of all is XLS-R, an entirely new approach to multilingual NLP trained on a massive collection of speech recordings in 128 languages. This could become the foundation NLP model for low-resource languages.

Build responsibly

If 2020 was the year that NLP ethics blew up, then 2021 was the year that ethics considerations became not just normal but expected. NeurIPS led the way with ethics guidelines and a call on authors to include a "broader impact" section in their papers.

But like the rest of the AI industry, NLP is still self-regulating. That will change. The most significant action in AI self-regulation last year was Facebook's voluntary deletion of its facial recognition data. That move was surely made in anticipation of government regulation and liability. (They also have more than enough surveillance data on their customers to power their targeted advertising.)

Where will the rubber meet the road for NLP regulation? AI companies that work closely with governments will lead the way, by necessity. Here at Primer, we build NLP systems for the US, UK, and Australian governments, helping with tasks as diverse as briefing report generation and disinformation detection. This front-row seat to the NLP revolution is humbling.

One thing is now clear. The machine learning tools that we are building today to process our language will transform our society. An intense research effort is underway to spot potential biases, harms, and mitigations. But every voice of hope and concern for this technology must be heard. Building NLP responsibly is our collective duty.

Read more: The Business Implications of Machines that Read and Write

Primer Enterprise

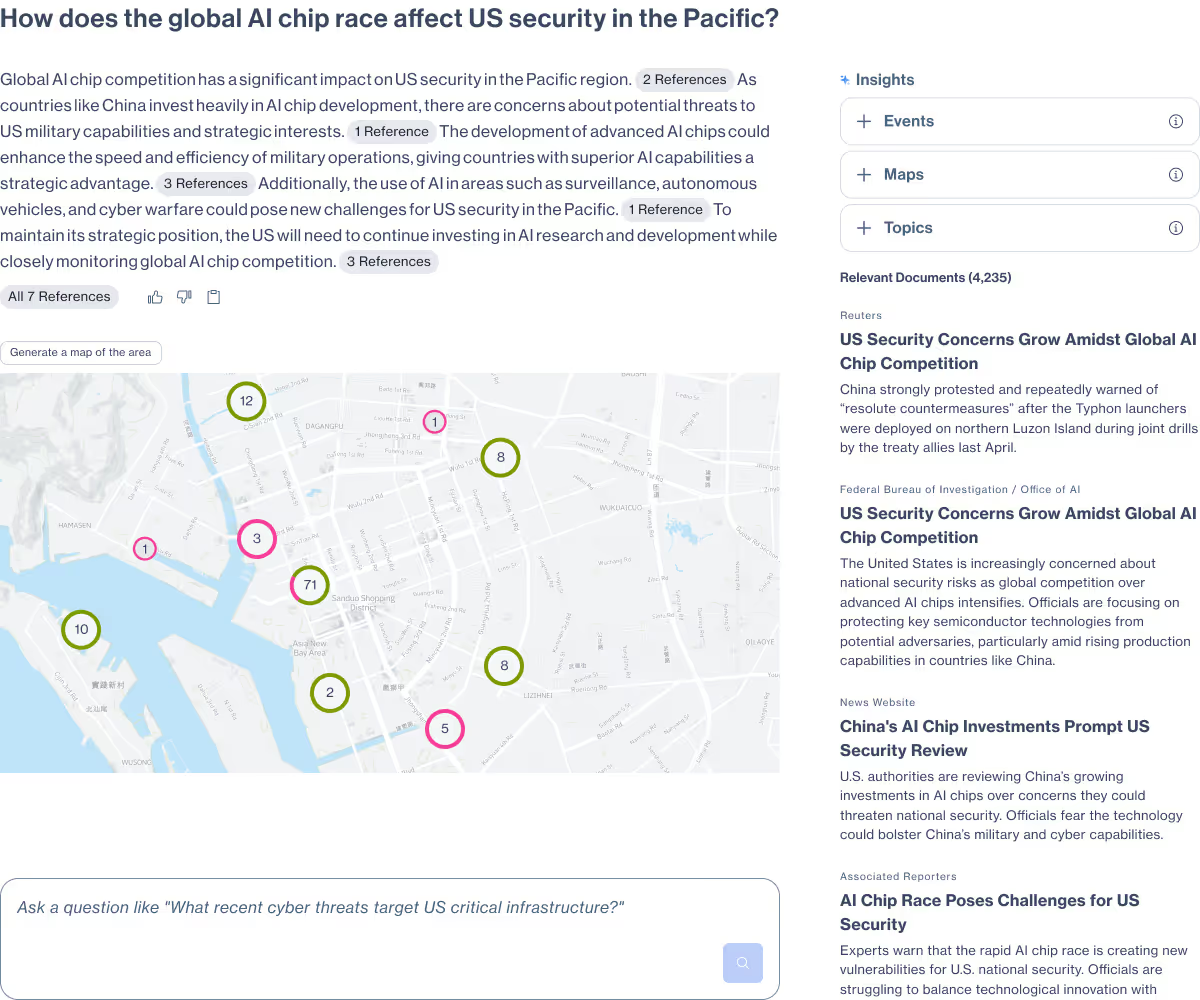

Informed, defensible analysis

Primer Enterprise is a secure AI platform that helps analysts and mission teams across the Intelligence Community, Defense, and Civilian agencies analyze massive volumes of unstructured data. It transforms fragmented reports, proprietary data, and open-source information into structured, traceable insight that supports faster, defensible decision-making.

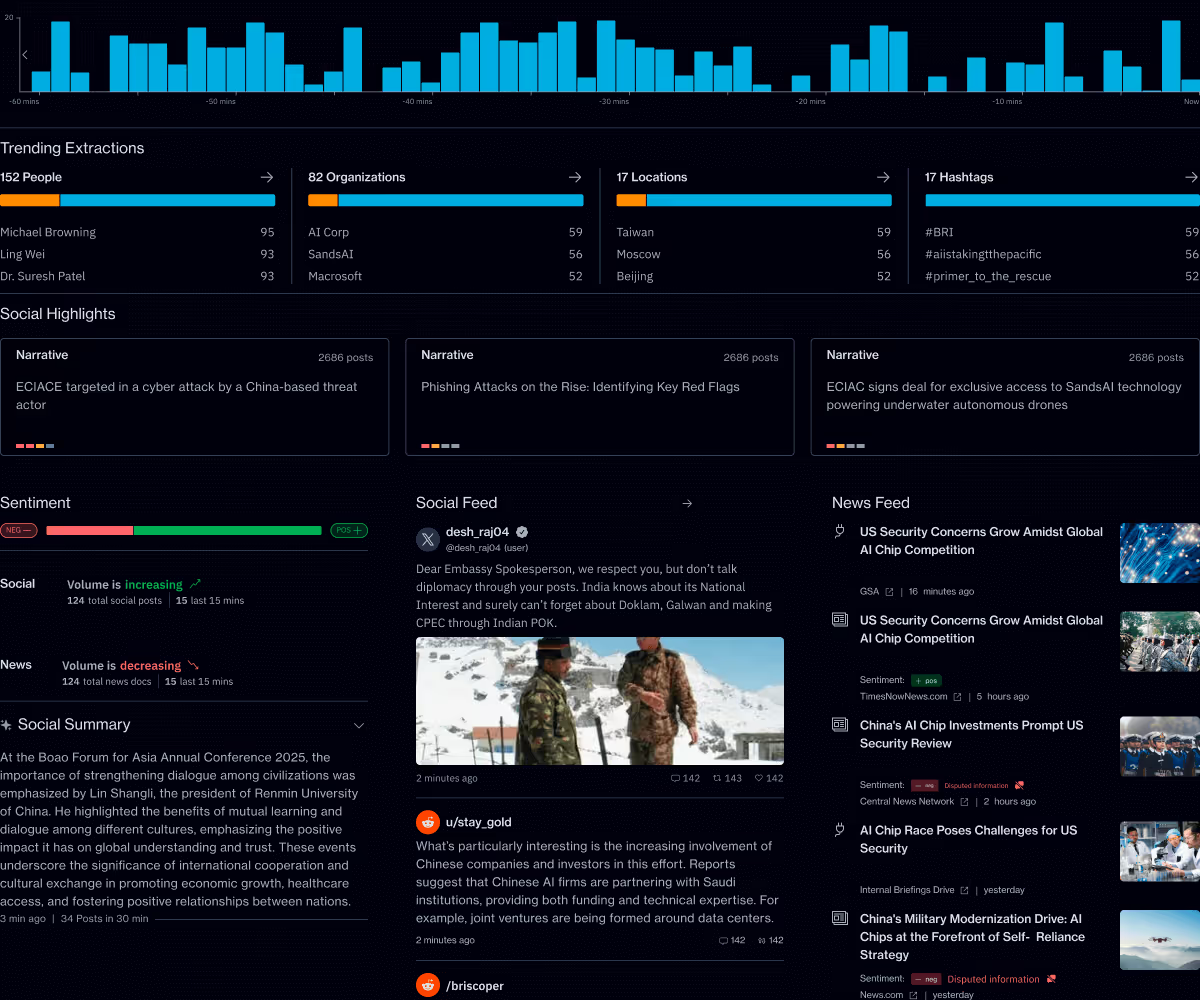

Primer Command

Real-time operational clarity

Primer Command is an AI-powered monitoring platform that helps mission teams keep track of narratives, track evolving topics, and detect emerging threats across global news and social media. It provides real-time visibility into the information environment so leaders can understand events as they unfold.