Say Goodbye to Annotation Lead Times

Applied natural language processing (NLP) is all about putting your domain expertise into an infinitely scalable box. Expertise is expensive, and engaging a fleet of experts to trawl through the deluge of text that comes into your systems every day is cost-prohibitive. Even if money were no object, it would be challenging to find, recruit, and manage the sheer number of experts needed to deal effectively with an ongoing torrent of new information.

What if you could bring your expertise to bear on only a few examples and then hand them off to a machine to analyze? That’s the promise of NLP. You put your domain expertise in a machine-readable format by annotating data, and the NLP algorithms turn that data into a box that not only understands what you’ve shown it but also generalizes to previously unseen words, phrases, and concepts. Because it’s a machine, not a human, you can scale your new box infinitely.

Labeling is laborious

The one snag is that you’ll need to label data to get your expertise into a machine-readable format. No one likes to label data. It costs money and takes time. It’s hard to do. It’s tedious and monotonous. Unfortunately, when most people realize how laborious annotation is, they try to minimize the amount of annotation they need to do instead of minimizing the time they spend labeling. Not only do most “cost saving” workarounds end up costing more, they also reduce the quality of the data that is produced.

Multitasking adds exponential risk

The number one failure point in annotation projects is asking annotators to do multiple things at once. The logic behind this mistake seems sound at first glance. “I’m paying these folks by the hour, so why would I have them look at the same document three times, once for each task I need them to do? I can have them look at the document once and do three things instead.”

But this unfortunate line of thinking overlooks a whole host of subtleties. Annotation throughput only matters once annotation quality is satisfactorily high. Remember the adage: “Garbage in, garbage out.” You want your annotated examples to reflect the full extent of your expertise: gold, not garbage, in that scalable box.

The x-factor of cognitive load

Annotation quality is heavily influenced by the cognitive load placed on the annotator. If you ask someone to make three decisions instead of one, that’s roughly three times the cognitive load on that person. Not only does this make every decision slower, but it also increases the probability of error with every decision, thus lowering the throughput and quality of the entire annotation endeavor.

Touch time vs. lead time

Another source of this misguided tendency towards multitasking is a project manager’s inability to distinguish between lead time and touch time. Touch time measures the time it takes to make a single annotation. For example, how long does it take to find a word and double-click on it? Maybe 500 milliseconds.

Lead time, on the other hand, measures the time it takes for the annotator to be ready to begin annotation work. For example, how long does it take for the source document to load? It could take several more minutes if the annotator first needs to call their manager and ask what to work on. Stepping back even further, lead time also includes the time it takes for a project manager to plan the work for a team of annotators and build a work schedule. This could be days or weeks if the manager is busy with other, higher-priority tasks, out sick, or on vacation.
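
To see why the distinction matters, here is a minimal sketch of the arithmetic in Python. The 500 millisecond touch time comes from the example above; the lead-time values and the function name are illustrative assumptions, not measurements, and the sketch simplifies by assuming each unit of work contains a single annotation.

```python
def annotations_per_hour(touch_time_s: float, lead_time_s: float) -> float:
    """Throughput when every annotation pays both lead time and touch time."""
    return 3600.0 / (touch_time_s + lead_time_s)


TOUCH_TIME_S = 0.5  # from the example above: about 500 ms per annotation

# Hypothetical lead times, chosen only for illustration.
scenarios = [
    ("no lead time", 0.0),
    ("5 s document load", 5.0),
    ("3 min to find out what to work on", 180.0),
]

for label, lead_time_s in scenarios:
    rate = annotations_per_hour(TOUCH_TIME_S, lead_time_s)
    print(f"{label}: {rate:.0f} annotations/hour")
```

Under these assumed numbers, lead time dominates the total: shaving touch time further barely changes throughput until the lead time is dealt with.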

Goodbye lead times

Long lead times might be something that your organization is used to (and thus expects), but unlike death and taxes, you can actually avoid them. In annotation workflows, the primary source of lead time is coordinating work: the time it takes for an annotator to discover the next unit of work they need to complete, fetch that unit of work, and read any necessary instructions. LightTag fully automates the allocation of work among a pool of annotators and embeds instructions within the labeling interface, so the average lead time in LightTag is measured in milliseconds, not minutes.
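
Here is a minimal sketch of what automated work allocation can look like, in Python. This is not LightTag’s implementation; the `WorkQueue` class, its method names, and the sample data are all hypothetical. It only illustrates the idea in the paragraph above: an annotator asks for the next unit of work and immediately receives both the text and its instructions, with no manager in the loop.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkUnit:
    text: str
    instructions: str  # travels with the unit, so no separate lookup is needed


class WorkQueue:
    """Hypothetical allocator: hands each annotator the next unassigned unit."""

    def __init__(self, units):
        self._pending = deque(units)
        self.assigned = {}  # annotator name -> list of WorkUnit

    def next_unit(self, annotator: str) -> Optional[WorkUnit]:
        if not self._pending:
            return None  # nothing left to annotate
        unit = self._pending.popleft()
        self.assigned.setdefault(annotator, []).append(unit)
        return unit


queue = WorkQueue(
    WorkUnit(text=t, instructions="Highlight every drug name.")
    for t in ["First example paragraph.", "Second example paragraph."]
)
print(queue.next_unit("alice"))
print(queue.next_unit("bob"))
print(queue.next_unit("alice"))  # the queue is empty at this point
```

Because allocation is a single in-memory operation in this sketch, the time an annotator waits for work is negligible compared with a phone call or a planning meeting.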

Case study: Drug-drug interaction annotation

Let’s look at a case study. A major pharmaceutical company wanted to build a corpus of drug–drug interactions (DDIs). Given a paragraph mentioning two or more drugs, the task was to extract the pairs of drugs that were said to be interacting (e.g., don’t mix Xanax and alcohol).

Annotating DDI references would seem to require a team of highly paid pharmacologists to pore through thousands of medical articles, highlight all mentions of drugs, and then somehow connect each pair of interacting drugs, a slow and expensive process indeed.

LightTag’s UX and project management capabilities reduced both lead time and touch time to the point where our customer was able to outsource the annotation work and use their internal domain experts only for spot checking the final result.

How did it work? LightTag minimized the project’s lead time by pre-annotating individual drugs and displaying one pair of highlighted drugs at a time. This lowered the cognitive load on the annotator, allowing them to work faster and with less risk of error.

Traditionally, relationship annotation is done via a drag-and-drop interface where annotators manually form connections between entities. LightTag further reduced touch time by changing that paradigm. Since pairs of entities were pre-annotated, the annotator needed only a single click to classify whether and how a pair was related, instead of the multiple point-and-click steps they’d need to take in a traditional setup.
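
The pair-at-a-time idea can be sketched in Python as follows. This is an illustration, not LightTag’s code: the `candidate_pairs` helper, the label names, and the sample sentence are assumptions made for the example. Given drug mentions that were already pre-annotated, it enumerates each unordered pair once, so the annotator only has to pick one label per pair.

```python
from itertools import combinations

# Hypothetical label set; a real project would define its own.
LABELS = ("interacts", "no_interaction")


def candidate_pairs(drug_mentions):
    """Return every unordered pair of distinct pre-annotated drug mentions."""
    unique = sorted(set(drug_mentions))
    return list(combinations(unique, 2))


text = "Do not combine drug A with drug B; drug C is safe with either."
mentions = ["drug A", "drug B", "drug C"]  # assumed output of pre-annotation

for left, right in candidate_pairs(mentions):
    # In an annotation tool, each pair would be shown highlighted in `text`
    # and the annotator would choose one of LABELS with a single click.
    print(f"{left} / {right}: choose one of {LABELS}")
```

Three mentions yield three pairs, and each pair is a single yes-or-no style decision rather than a find, drag, and connect sequence.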

LightTag also minimized lead time by allowing our customer to outsource the annotation work. They could define the work to be done in bulk and automatically distribute it to annotators in small batches. This meant that the project management team did not need to spend time on work allocation during the course of the project, nor did annotators have to wait for work to be assigned to them. The end result: what used to take months took only a few days.

Today, LightTag is available either as a standalone SaaS product or as an on-premises offering for those seeking an annotation solution only. LightTag capabilities are also available as part of the Primer platform, where they integrate with model training, data ingestion, and production QA for NLP workflows.

To get hands-on with LightTag, try it for free today.

Primer Enterprise

Informed, defensible analysis

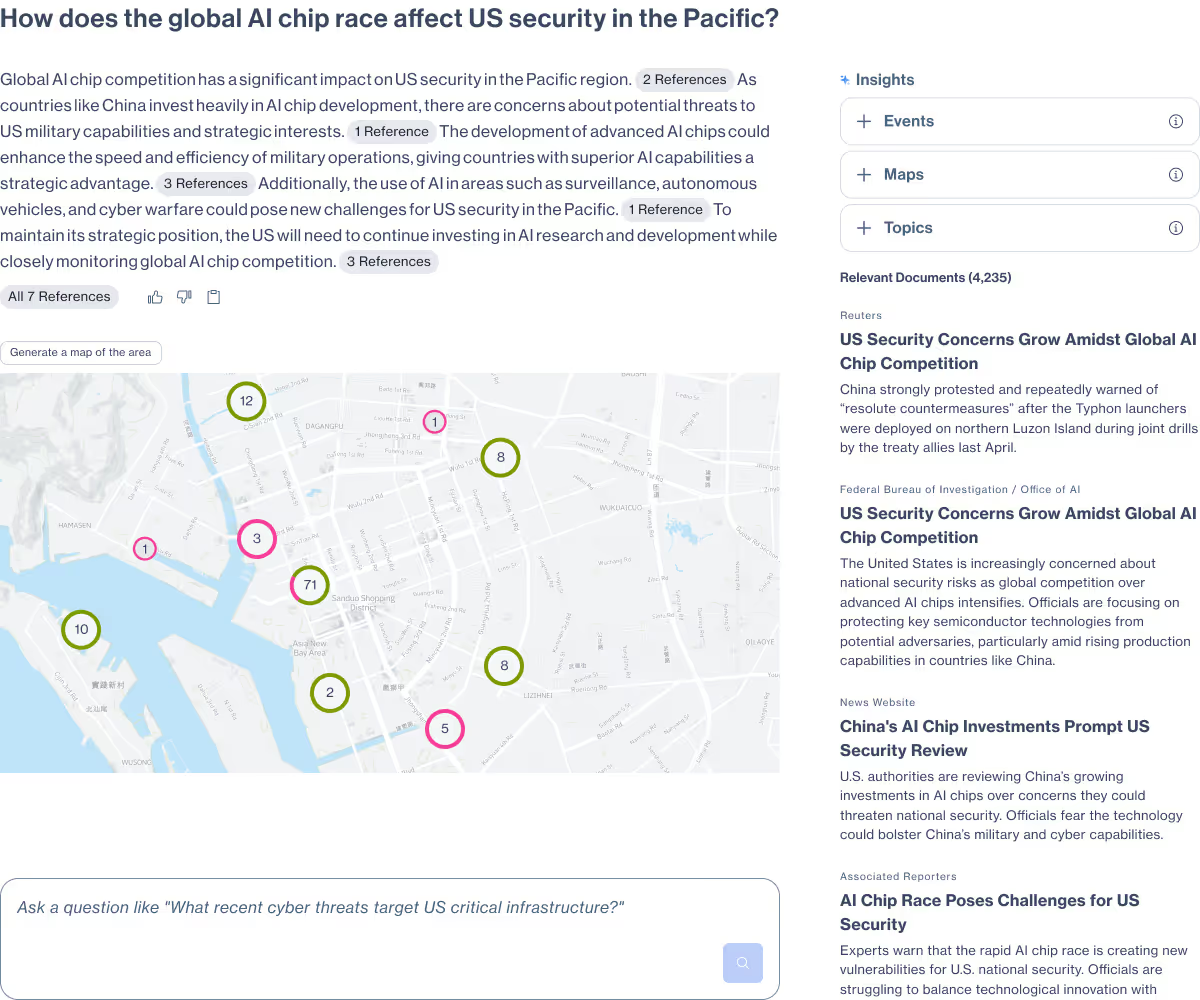

Primer Enterprise is a secure AI platform that helps analysts and mission teams across the Intelligence Community, Defense, and Civilian agencies analyze massive volumes of unstructured data. It transforms fragmented reports, proprietary data, and open-source information into structured, traceable insight that supports faster, defensible decision-making.

Primer Command

Real-time operational clarity

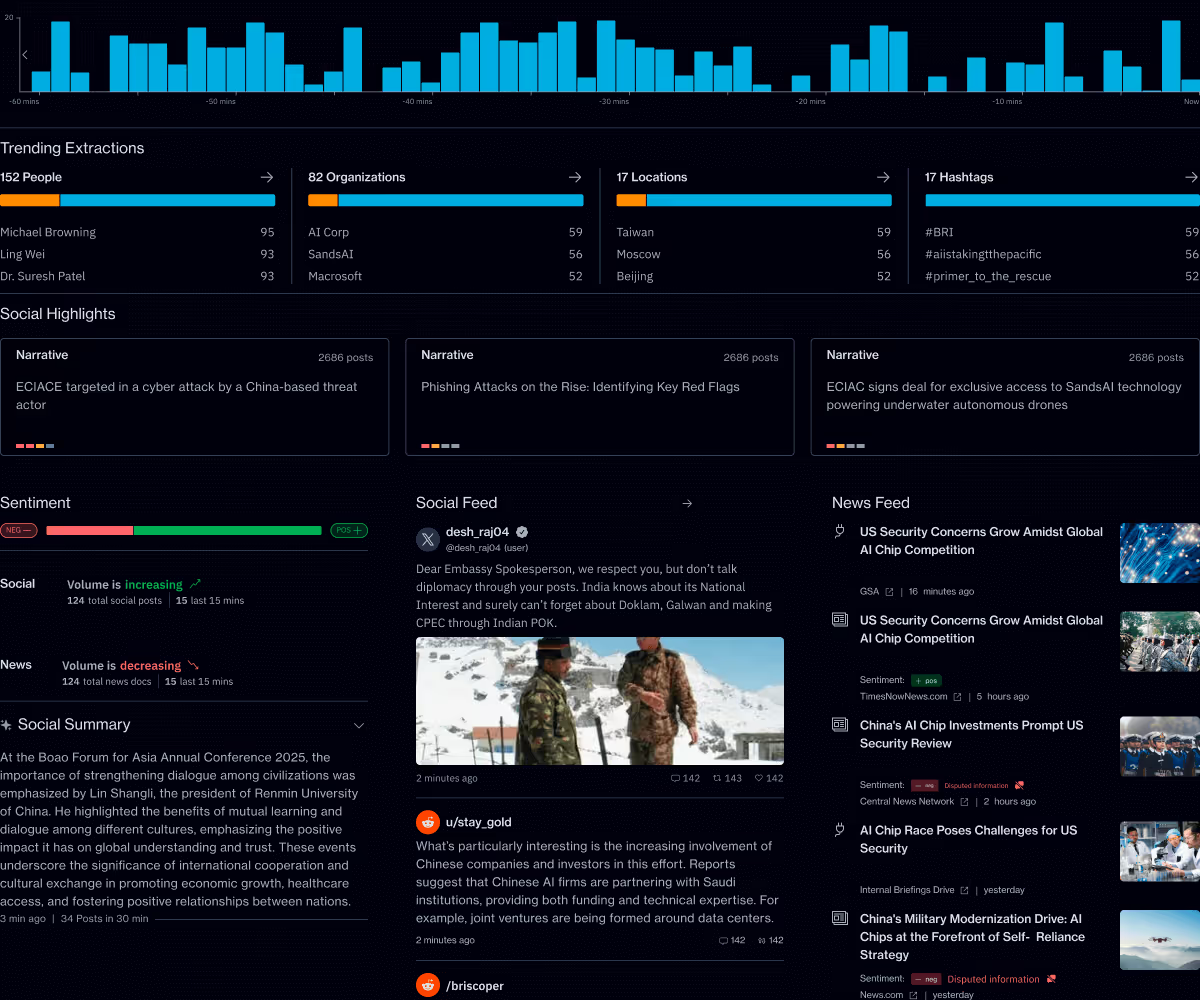

Primer Command is an AI-powered monitoring platform that helps mission teams keep track of narratives, track evolving topics, and detect emerging threats across global news and social media. It provides real-time visibility into the information environment so leaders can understand events as they unfold.