To Counter AI-powered Disinformation, We Need to Empower our National Security Analysts with AI

What are the biggest developments in artificial intelligence and machine learning that will impact national security? Why is disinformation so hard to counter? Brian Raymond, Vice President of Government at Primer, discussed these questions and others with Terry Pattar on the Janes World of Intelligence podcast. Check out our podcast recap for the key takeaways from this lively discussion and check out the full podcast here.

This past week, Primer VP Brian Raymond met with Terry Pattar, head of the Janes Intelligence Unit, to talk about the trends and challenges he’s seeing at the nexus of machine learning and national security. During the conversation, Brian and Terry delved deep into the institutional and technical challenges faced by the US and its allies in countering disinformation, and ways to overcome them. For background, misinformation refers to false or out-of-context information that is presented as fact, regardless of any intent to deceive. Disinformation is generally considered more problematic because it is false information that is designed to manipulate perceptions, inflict harm, influence politics, and intensify social conflict.

Disinformation spreads like wildfire

With emerging machine learning technologies, it’s become increasingly easy for our adversaries to spread sophisticated disinformation to advance their strategic objectives and undermine our democratic institutions. As the cost of spreading disinformation has plummeted, our adversaries have proven able to wield this weapon efficiently and at scale. It is an effective asymmetric tool against the West because it actively undermines shared understanding and truth, and, contrary to our societal underpinnings, our adversaries take no issue with putting the full force of the state behind it.

“It is orders of magnitude cheaper to pollute the information environment with falsehoods than it is to find whatever has been put into the information environment that’s polluting it and to counter it.”

– Brian Raymond

Detection is expected to get increasingly difficult

Over the past several years, detecting botnets that create algorithmically generated disinformation relied on “tells” in the text content. However, last June, the AI research lab OpenAI trained a large-parameter deep learning model called GPT-3 that is capable of generating text as fluently as a human, ranging from tweets to long-form articles. While GPT-3 has not been released to the public, a research consortium is working on an open source version called GPT-Neo and is steadily releasing increasingly powerful versions of this model. GPT-Neo is but the first of a wave of powerful open source “transformer” language models that can be easily deployed to generate effectively limitless streams of high quality synthetic text on demand.

“And so that’s why getting into the message of what’s being conveyed and understanding it from a natural language understanding perspective is going to be absolutely critical because they won’t have tells that you can pick up on.”

– Brian Raymond

Sidestepping this problem, Primer’s Natural Language Processing (NLP) Platform can automatically build a sourced and referenced knowledge base of events, entities, and relationships to understand which new claims are catching hold, which groups are pushing them, and which audiences are receptive to them. This additional context can provide early warnings and a means to constantly surveil the information manipulation landscape, and it better positions operators to quickly contrast the claims being made against a ground truth knowledge base. (See this WIRED article or this Defense One article for more details about how Primer is supporting US national security efforts to counter disinformation.)
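To make the idea of contrasting a claim against a ground truth knowledge base more concrete, here is a minimal sketch in Python. It is an illustration only and does not describe how Primer's platform works: the `Fact` schema, the `check_claim` function, and the sample entries are all hypothetical, and the sketch assumes each relation has a single true value, so a different stored value counts as a contradiction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    """One sourced statement in the knowledge base (hypothetical schema)."""
    subject: str
    relation: str
    obj: str
    source: str


def check_claim(claim, knowledge_base):
    """Compare a (subject, relation, object) claim with the stored facts.

    Returns a (verdict, facts) pair, where verdict is "supported",
    "contradicted", or "unknown", and facts are the sourced entries
    the verdict is based on.
    """
    subject, relation, obj = claim
    # Only facts about the same subject and relation are relevant.
    related = [f for f in knowledge_base
               if f.subject == subject and f.relation == relation]
    if not related:
        return "unknown", []
    matches = [f for f in related if f.obj == obj]
    if matches:
        return "supported", matches
    # Assumption: relations are single-valued, so a different stored
    # value is treated as contradicting the claim.
    return "contradicted", related


# Made-up sample data, for illustration only.
knowledge_base = [
    Fact("Bridge X", "status", "open", "report-001"),
    Fact("Port Y", "operator", "Company Z", "report-002"),
]

verdict, evidence = check_claim(("Bridge X", "status", "destroyed"), knowledge_base)
print(verdict, [f.source for f in evidence])
```

Because every fact carries its source, an operator who sees a "contradicted" verdict can trace it back to the underlying report rather than taking the result on faith.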

We solved it once, we can solve it again

Disinformation in and of itself, as well as the societal discord it can sow, is nothing new. It has been a national security concern for decades; the Cold War was largely waged by propagating competing versions of the truth. In the early 1980s, the Active Measures Working Group was established in the US to identify and counter Soviet propaganda. The effort was effective, bringing Mikhail Gorbachev to the table; he subsequently ordered the KGB to scale down its disinformation efforts. After the fall of the Soviet Union, that playbook was shelved until Russia annexed Crimea in 2014. Unfortunately, though, the world has changed and that same template won’t work today. Information now moves in real time and is far more diffuse than it was when the old-school playbook was written. Countering disinformation today requires an entirely different toolkit.

We can’t hire ourselves out of this problem

One study that shows the extent of this challenge was published by TechCrunch, which looked at the volume of text a typical intelligence analyst covers on a daily basis. The study found that in 1995 an analyst had to read about 20,000 words a day to stay aware. By 2015, that had increased tenfold. By 2025, the study expected that to be in the millions of words per day. Likewise, a study by IDC titled Data Age 2025 predicted that worldwide data creation will grow to 163 zettabytes by 2025, ten times the amount of data produced in 2017.
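A quick back-of-the-envelope calculation shows what these word counts mean in reading time. The sketch below is illustrative only: the reading speed of 250 words per minute is our assumption, not a figure from the study, and one million words per day is used as the lower bound of "millions."

```python
WORDS_PER_MINUTE = 250  # assumed reading speed, not taken from the study


def reading_hours(words_per_day, words_per_minute=WORDS_PER_MINUTE):
    """Hours needed to read the given number of words at a steady pace."""
    return words_per_day / words_per_minute / 60


words_1995 = 20_000             # figure cited above
words_2015 = words_1995 * 10    # "increased tenfold"
words_2025_floor = 1_000_000    # lower bound for "millions of words"

for year, words in [(1995, words_1995),
                    (2015, words_2015),
                    (2025, words_2025_floor)]:
    print(f"{year}: {words:,} words/day is about "
          f"{reading_hours(words):.1f} hours of reading")
```

Under that assumed reading speed, the 2015 volume already exceeds a full working day, and even the low end of the 2025 projection is more than one person could read in 24 hours.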

The defense and intelligence community recognizes that it can’t hire its way out of this problem. This is why it’s so critical to pair operators and analysts with algorithms to accelerate rote work and to help them uncover connections and insights buried in the data. Analysts can use Primer’s products to automate the organization of that intelligence into knowledge bases that cluster, curate, and organize reporting into specific areas of interest. These knowledge bases can be further automated to continuously analyze and self-update with new intelligence reports.

This enables them to spend less time on rote, manual tasks and more time getting at the “why,” “so what,” and “what next” questions. The analyst is then positioned to deliver a deep, rich brief that serves the policymakers’ needs.

Bridging public and private partnerships is key to any solution

What we need to do now is step back and take a fresh look at how to counter disinformation. To seize the advantage in this asymmetric space, we need a comprehensive approach: we need the US, its allies, and the private sector to come together. The Special Operations Community, the US Air Force, and the intelligence and defense communities more broadly have recognized this and have made big investments in data, data curation, infrastructure like classified clouds, and the NLP applications needed to process the enormous volumes of data produced each day across units, organizations, services, and allied partners.

On the technology side, Primer has been steadily developing not only NLP Engines that perform at the level of a human analyst but also entire machine learning pipelines that nest within analyst and operator workflows. We have developed a no-code AI model labeling, training, and deployment platform that can be run by operators on the frontlines who confront disinformation and other challenges every day. Operators can now encode their tacit knowledge within the machine and essentially teach it to understand their world; no technical skills needed.

Primer is effectively reducing the friction of using AI in the operational context. With a common infrastructure for sharing large volumes of data, the US and its allies will have the tools to rapidly build and retrain best-in-class NLP models to support tactical efforts.

“I think that’s going to be the big tipping point here, [..] that when we make it easy enough and fast enough for the folks that are on the front lines to encode their tacit knowledge into these models and use them and lower those costs, that’s when we’re going to see a paradigm shift and really how we’re pairing algorithms with analysts and operators.”

– Brian Raymond

There is an eagerness for AI-enabled efforts

It’s clear that there is an appetite and high-level support across the intelligence and defense services to get their analysts and operators the AI-enabled and AI-driven tools they need to confront these challenges. Significant organizational issues have yet to be overcome, but once the infrastructure is in place we will see the adoption and integration of AI solutions accelerate and proliferate across a range of national security applications. Investments in these areas will become more urgent as near-peer and other geostrategic competitors exploit asymmetric strategies to weaken the West and advance their foreign policy objectives.

Learn More

To learn more about Primer’s technology, download the AI Technology report or contact us to discuss your specific needs.

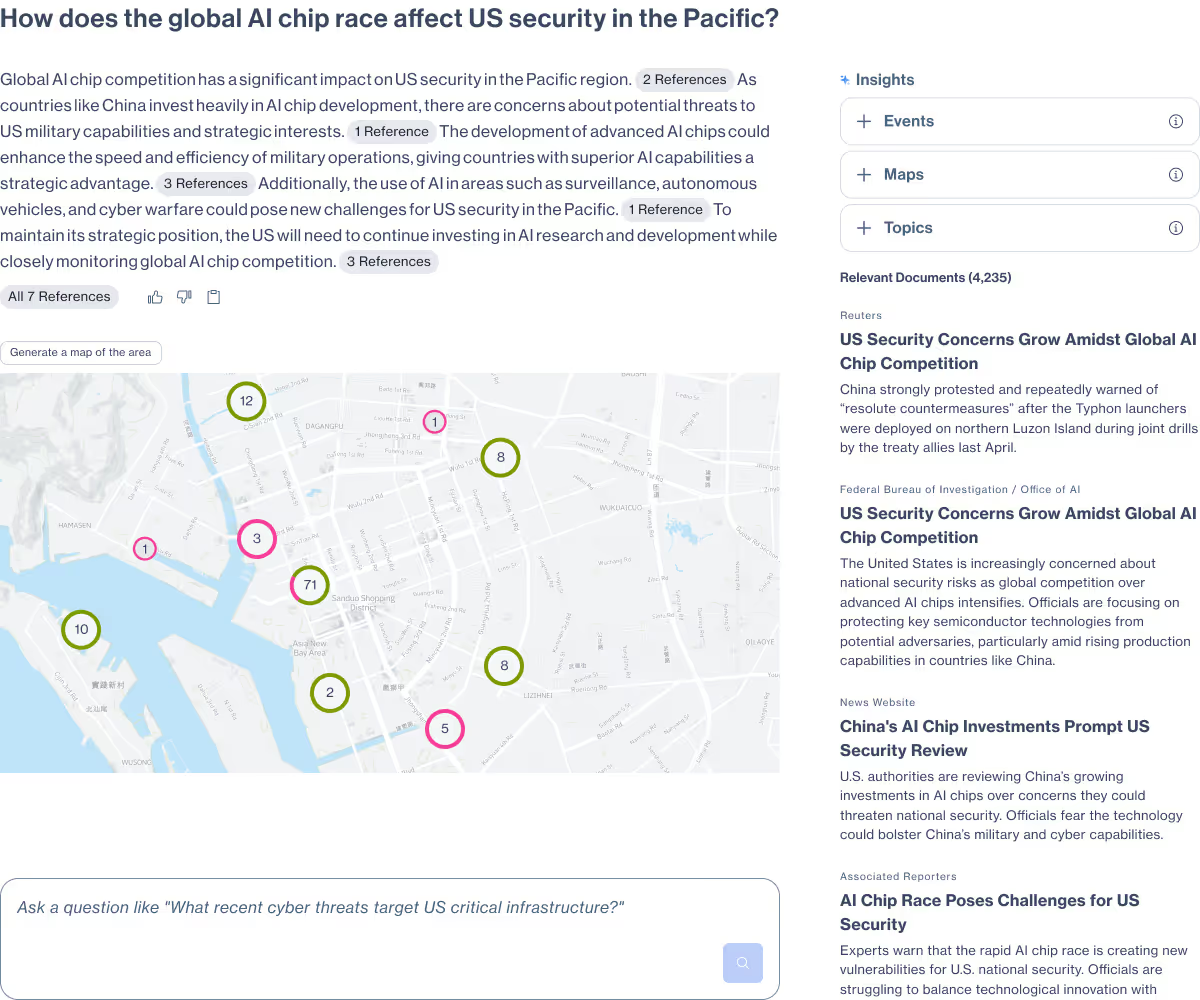

Primer Enterprise

Informed, defensible analysis

Primer Enterprise is a secure AI platform that helps analysts and mission teams across the Intelligence Community, Defense, and Civilian agencies analyze massive volumes of unstructured data. It transforms fragmented reports, proprietary data, and open-source information into structured, traceable insight that supports faster, defensible decision-making.

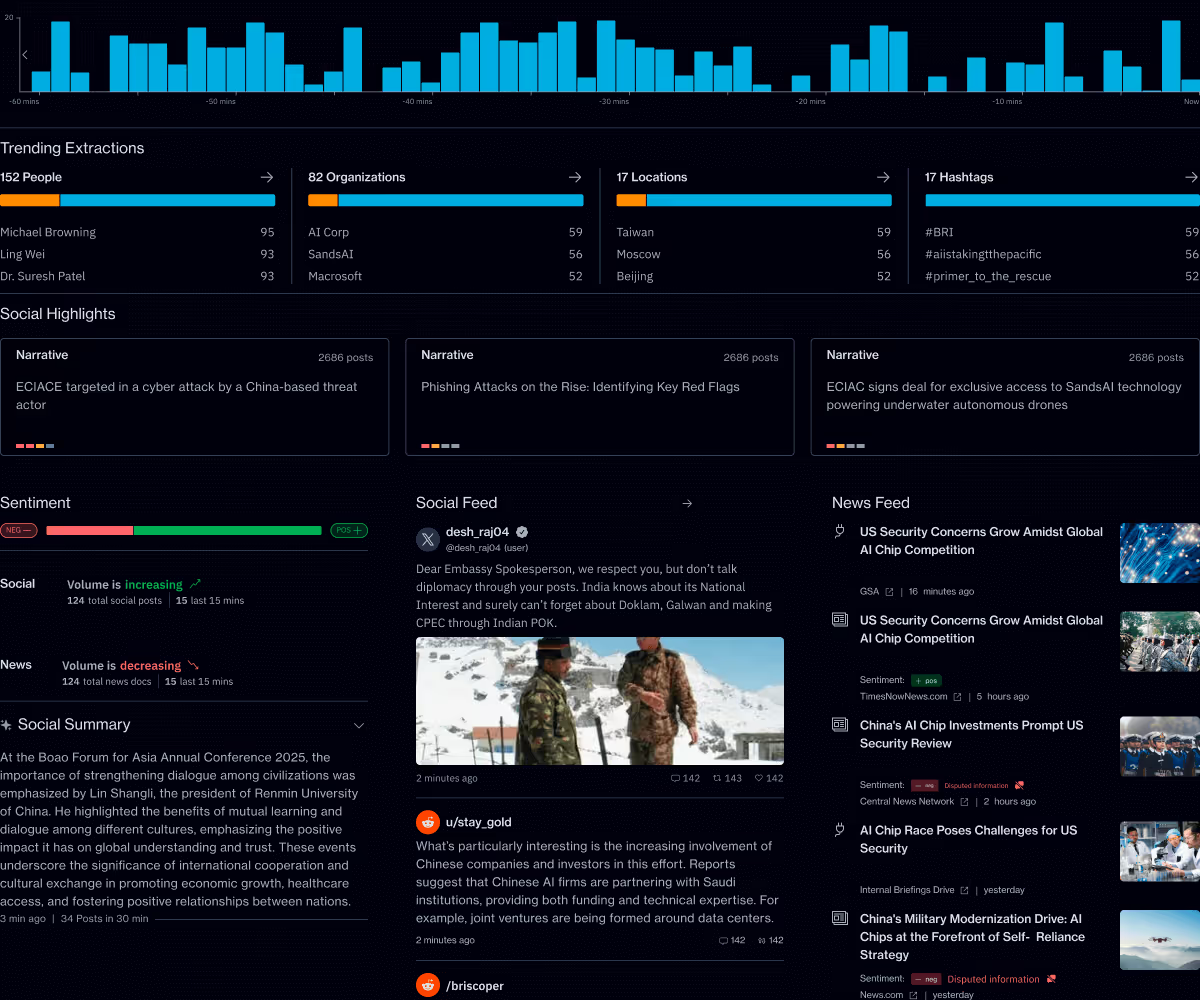

Primer Command

Real-time operational clarity

Primer Command is an AI-powered monitoring platform that helps mission teams keep track of narratives, track evolving topics, and detect emerging threats across global news and social media. It provides real-time visibility into the information environment so leaders can understand events as they unfold.