How Emerging AI Technologies Can Help Us Think 'Smarter'

Every morning, analysts, operators, and policymakers arrive at their desks to read the latest news and intelligence reporting that has come in during the past day. The daily rhythm of “reading the morning traffic” has remained largely unchanged since the 1950s. Professionals in the intelligence community and military spend upwards of a third of their days poring over incoming cables to stitch together a coherent picture of worldwide events. Policymakers and senior military commanders increasingly struggle to consume the enormous volume of daily reporting and rely on analysts and operators to deliver tailored intelligence briefings to help them keep up.

The 2017 London Bridge attack served as a reminder that national security professionals, both in the U.S. and in partner countries, face information overload in the context of modern intelligence collection. In the 48 hours following the attack, intelligence analysts were confronted with more than 6,600 news articles about it, as well as tens of thousands of YouTube videos, tweets, and other social media postings. “The good news is we’ve got lots of information, but the bad news is we’ve got lots of information,” said Philip Davies, director of the Brunel Centre for Intelligence and Security Studies, in the wake of the attack. “I think we’re going to have to be realistic that MI5 and SIS (MI6) are being confronted with information overload in terms of scale and complexity. We have been cutting national analytical capability for 20 years. The collection of information has increased but if you cut back on analysis you get overload.”

Advances in machine learning and computer processing in recent years have triggered a wave of new technologies that aid in processing and interpreting images, video, speech, and text. To date, developments in computer vision and speech recognition have had the greatest impact, finding wide-ranging applications from helping drivers avoid accidents, to powering digital assistants, to recognizing key objects and people for reconnaissance purposes. However, advances in neural networks pioneered in the image processing domain have also helped make possible new AI technology capable of comprehending the unstructured language common in everyday life. This technology, known as Natural Language Processing (NLP), has reached a level of maturity at which it is now finding far-ranging applications across the national security community.

NLP broadly refers to the set of technologies that enable computers not only to understand but also to generate human-readable language. Driven by multi-layer neural networks, machine learning algorithms are now capable of a range of functions long the exclusive domain of humans, including drafting newspaper articles, summarizing large bodies of text (e.g., military after-action reports), and identifying a wide range of entities, including people, places, events, and organizations. Moreover, NLP can now understand the relationships among these entities, rapidly extracting and collating key information from thousands of documents, such as the number of casualties from a bombing, the political affiliation of an organization, or the type of illness afflicting a political leader. Perhaps most impactful, these algorithms can not only detect when a person is mentioned, but also gather all of the relevant information about that person across a large set of reporting, creating profiles on the fly. These capabilities are changing the paradigm for how national security professionals manage not only daily traffic but also information flows in times of crisis.

NLP to accelerate work of operators in the field

NLP is poised to deliver enormous time savings for media exploitation efforts by the special forces and intelligence communities. Over the past two decades, these organizations have perfected the exploitation phase of the targeting cycle, but few technologies have emerged that accelerate the analysis and dissemination phases.

This challenge is becoming more urgent as the amount of video, audio, and textual data being pulled off the battlefield skyrockets. General Raymond Thomas, Commander of U.S. Special Operations Command (SOCOM), disclosed last year at the GEOINT Symposium that SOCOM collected 127 terabytes of data from captured enemy material alone in 2017, not including live video from drones. “Every year that increases,” he said. By comparison, the 2011 Osama bin Laden raid yielded 2.7 terabytes of data.

The emerging ability to train new NLP algorithms on the fly is making the leap from the private to the public sector and is just now beginning to find uses among national security professionals. These technologies will enable these organizations to rapidly sift through staggeringly large caches of digital media to unearth the files most likely to be of intelligence value.

The introduction of these technologies will upend the longstanding approach of manual review by large teams of analysts and operators, enabling this work to be pushed down to small tactical teams in the field and dramatically accelerating their targeting cycles.

Inflection point with NLP for intelligence analysts

NLP technologies also will dramatically augment analysts’ ability to grapple with large volumes of news and intelligence reporting. This issue is becoming more acute as intelligence community (IC) leaders race to establish new IT platforms to facilitate rapid information sharing and collaboration. The establishment of Amazon’s C2S cloud environment for the CIA, the DNI’s push for the IC IT Enterprise, and the Pentagon’s looming $10B Joint Enterprise Defense Infrastructure (JEDI) cloud program are indicative of the broader trend toward deeper integration across the 17 IC agencies, and of the growing recognition that the IC’s future success is in large part tied to its ability to capitalize on emerging AI technologies. While fewer stovepipes will help ensure the right intelligence makes its way to the right analyst, operator, or policymaker at the right time, the additional intelligence will exacerbate the information overload these groups already grapple with.

NLP technologies are also eroding the tradeoff analysts historically have had to make between timely judgments and judgments based on a comprehensive analysis of available intelligence. These technologies are enabling analysts to read in each morning in a fraction of the time, and to interact with all of the reports hitting their inboxes each day, not just those flagged as highest priority or from the most prominent press outlets. The effect of these algorithms goes beyond increasing the speed and scale at which individual analysts can operate; they also mitigate source biases that were hitherto unavoidable. This is lowering the cost of pursuing hunches and exploring new angles on vexing issues, and it is freeing up time for analysts to learn about new issues.

For example, it’s now much less costly for an analyst covering Syria to leverage NLP to read in on how the Russian press is covering the fighting in that country, from documents originally written in Russian. These same NLP technologies also are enabling policymakers and commanders to engage with reporting more immediately, through automatic summarization and the ability to surface key facts in near real time.

Self-updating knowledge bases will mark historic paradigm shift for analysts

Perhaps most transformative, emerging NLP technologies are showing promise in powering auto-generating and auto-updating knowledge bases (KBs). Although still in their infancy, these self-generating “wikipedias” likely will have the most dramatic impact, eliminating potentially millions of worker-hours of labor spent manually curating KBs such as spreadsheets, link charts, leadership profiles, and order-of-battle databases. These next-generation KBs will continuously analyze every new piece of intelligence reporting to automatically collate key facts about people, places, and organizations in easily discoverable and editable wiki-style pages. The introduction of this technology will disrupt the daily rhythm of tens of thousands of analysts and operators who spend a significant amount of time each day cataloging facts from intelligence reporting.

Self-generating and self-updating KBs will make it easy for intelligence and military organizations to answer a frequently asked but very difficult question: “Tell me everything we know about person (or place or organization) X.” Even today, answering this question is an enormously costly and time-intensive process fraught with pitfalls. Inexperience, imperfect organization, or a lack of human resources make it impossible for organizations to fully leverage all of the data they already receive.

In the not-too-distant future, analysts likely will arrive each morning to review profiles created overnight by NLP engines. Rather than spending hours cataloging information in intelligence reports, they’ll simply review additions or edits automatically made to “living” databases, freeing them to work on higher-level questions and minimizing the risk of overlooking key intelligence.

See this article at The Cipher Brief

Primer Enterprise

Informed, defensible analysis

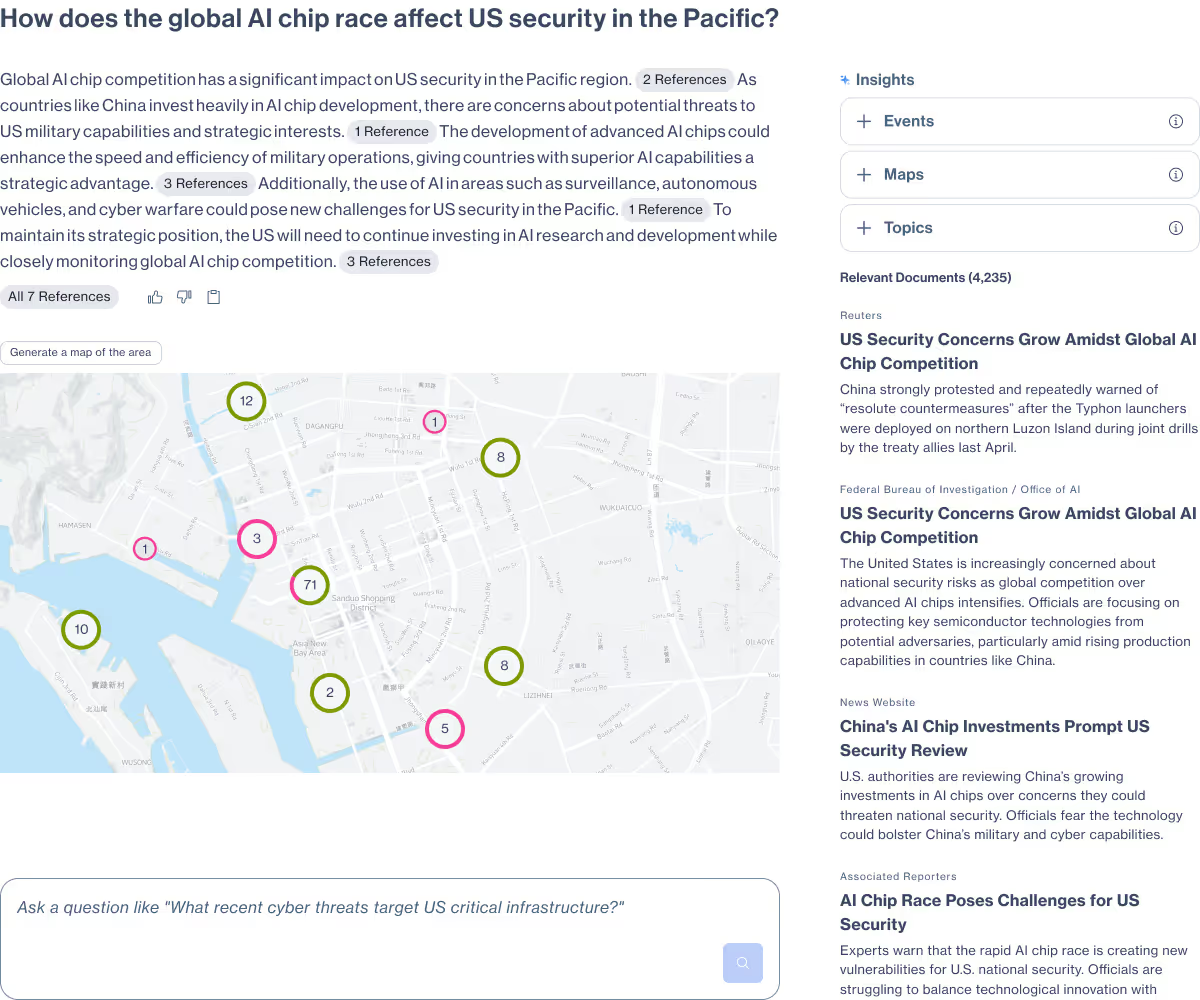

Primer Enterprise is a secure AI platform that helps analysts and mission teams across the Intelligence Community, Defense, and Civilian agencies analyze massive volumes of unstructured data. It transforms fragmented reports, proprietary data, and open-source information into structured, traceable insight that supports faster, defensible decision-making.

Primer Command

Real-time operational clarity

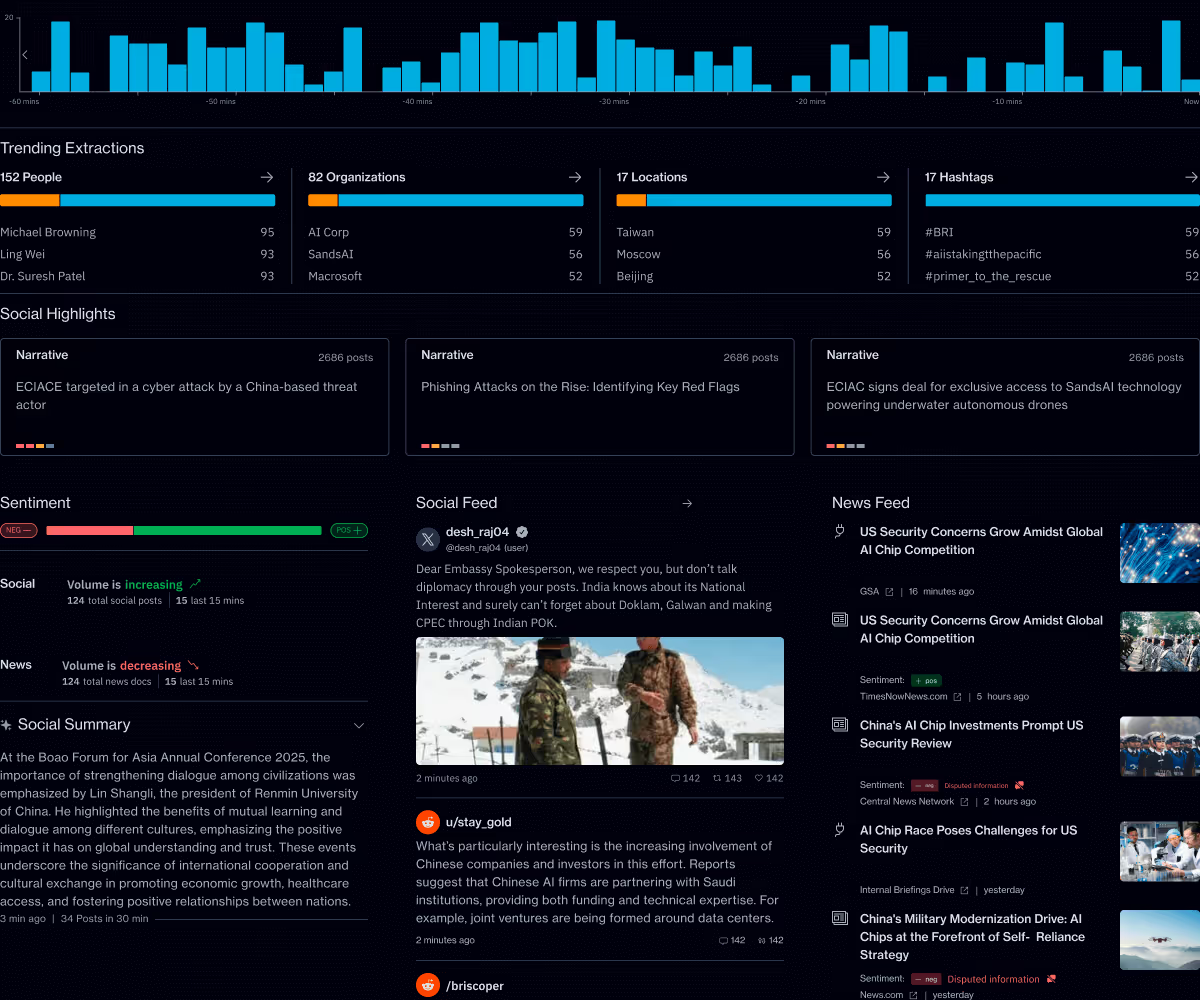

Primer Command is an AI-powered monitoring platform that helps mission teams keep track of narratives, track evolving topics, and detect emerging threats across global news and social media. It provides real-time visibility into the information environment so leaders can understand events as they unfold.