Two Products. One Mission

Primer offers two products, each built for distinct operational needs across the DoD mission set. Both are powered by the same trusted AI foundation and secure deployment posture.

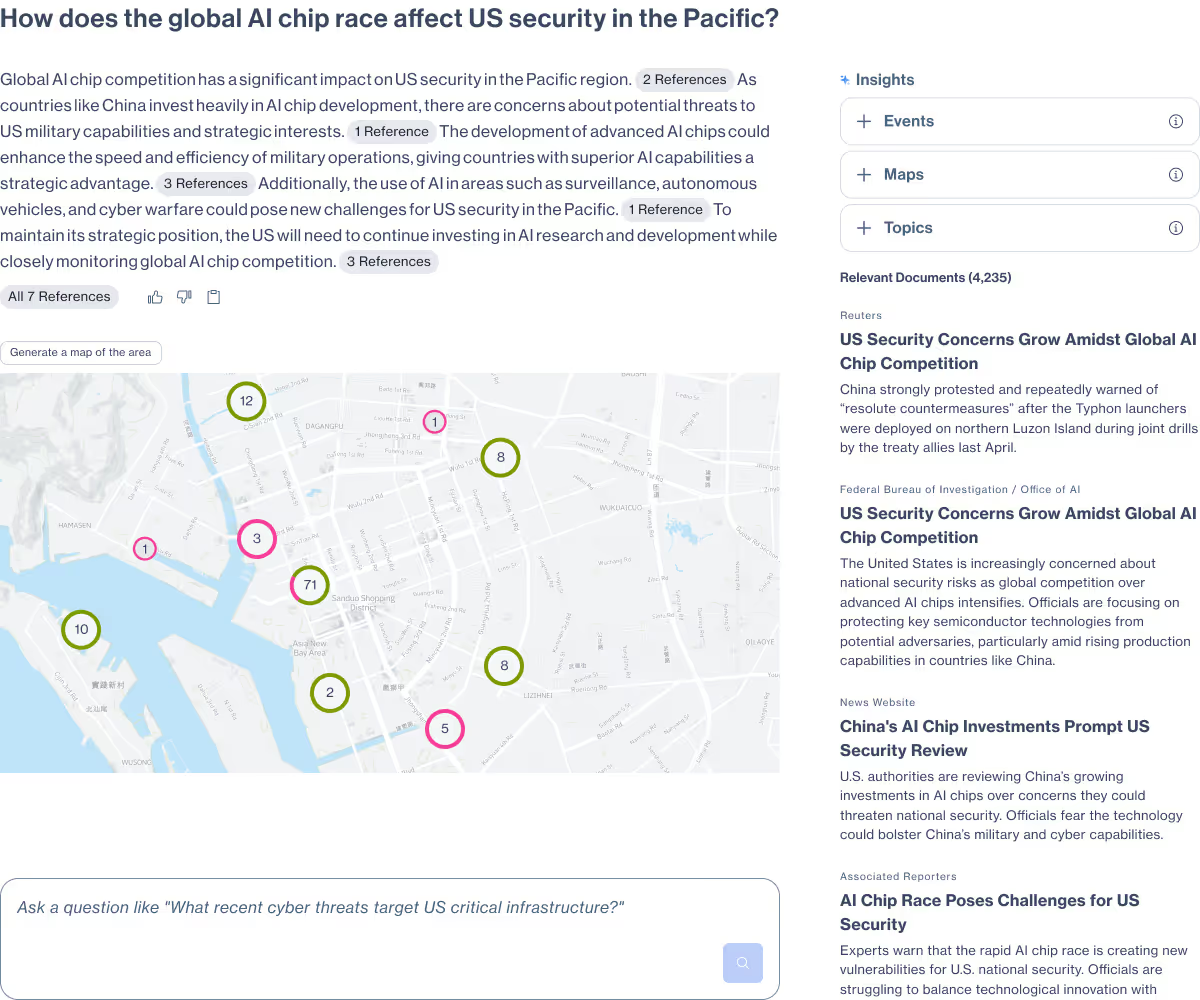

Primer Enterprise

Informed, defensible analysis

Primer Enterprise is a secure AI platform that helps analysts and mission teams across the Intelligence Community, Defense, and Civilian agencies analyze massive volumes of unstructured data. It transforms fragmented reports, proprietary data, and open-source information into structured, traceable insight that supports faster, defensible decision-making.

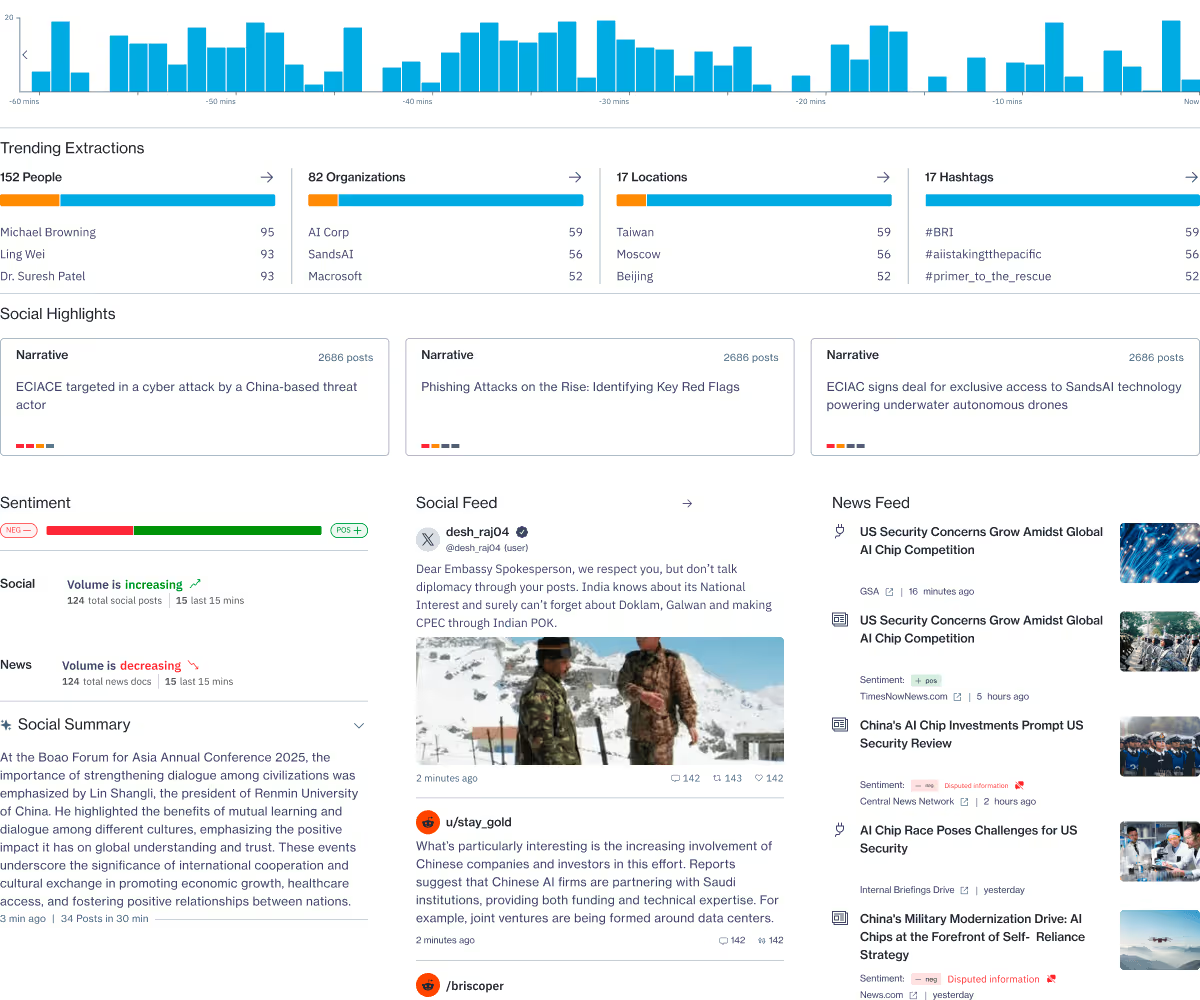

Primer Command

Real-time operational clarity

Primer Command is an AI-powered monitoring platform that helps mission teams keep track of narratives, track evolving topics, and detect emerging threats across global news and social media. It provides real-time visibility into the information environment so leaders can understand events as they unfold.

Security compliance and certifications